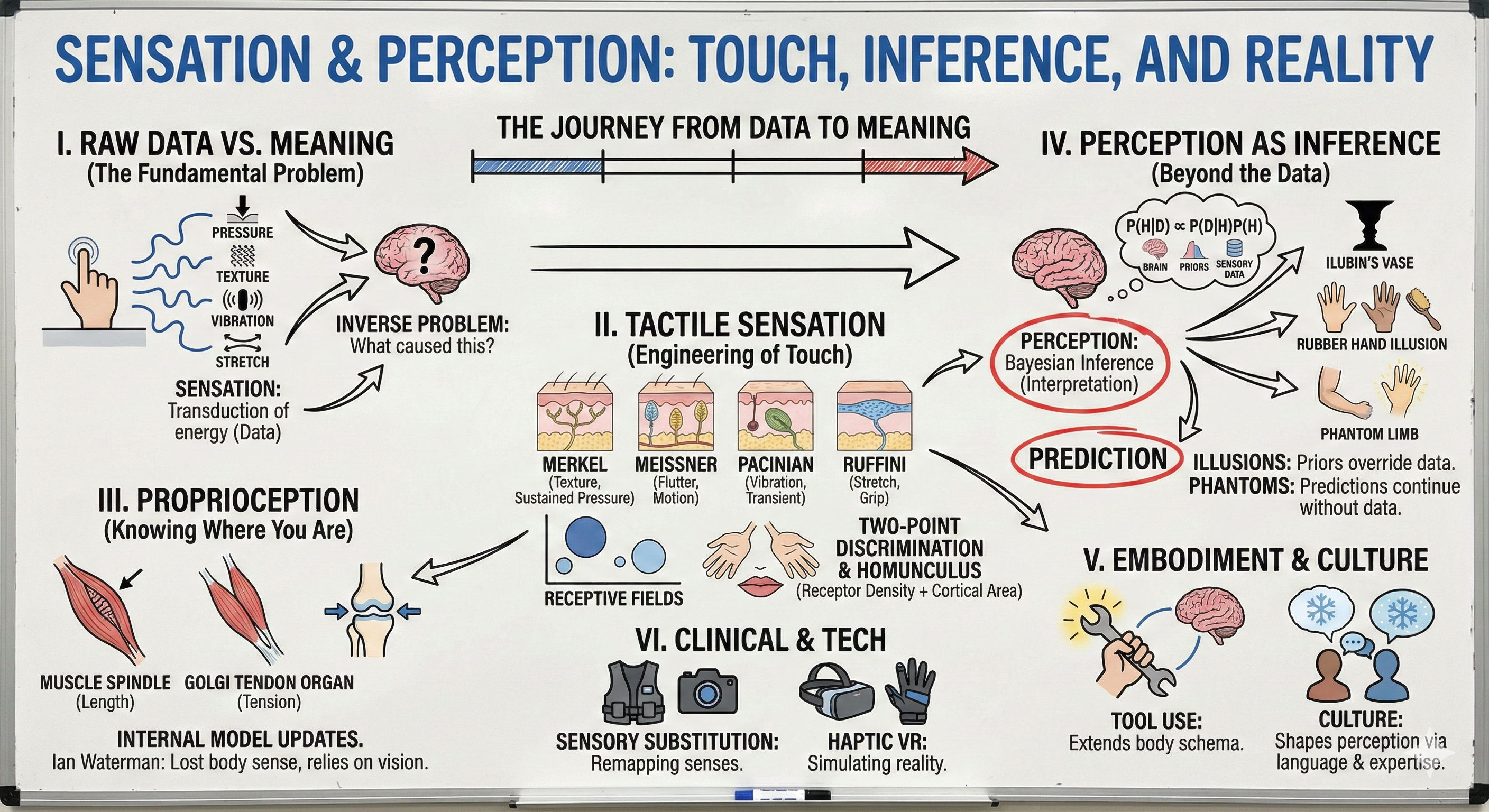

Sensation and Perception: Touch, Inference, and Reality

• Sensation vs. perception distinction

• Inverse problem and inference

• Helmholtz's unconscious inference

II. Tactile Sensation: The Engineering of Touch (20 min)

• Four receptor types, four sensations

• Receptive fields and spatial resolution

• Two-point discrimination and the homunculus

• Rapidly vs. slowly adapting receptors

III. Proprioception: Knowing Where You Are (12 min)

• Muscle spindles and Golgi tendon organs

• Joint receptors and position sense

• The man who lost his body (Ian Waterman)

IV. Perception as Inference: Beyond the Data (18 min)

• Bayesian brain hypothesis

• Illusions as perception successes

• Rubber hand illusion and body ownership

• Phantom limbs and predictive processing

V. Embodiment and Culture (10 min)

• Extended cognition and tool use

• Cultural differences in perception

• Inuit snow perception and expertise

VI. Clinical and Technological Applications (5 min)

• Tactile sensory substitution devices

• Haptic interfaces and VR embodiment

Your brain doesn't sense the world—it infers it. Right now, your fingers touching this page activate four types of specialized receptors, each firing at different rates to encode pressure, texture, vibration, and stretch. But that raw data tells you nothing about what you're touching. Your brain must solve the inverse problem: given these spike patterns, what object in the world caused them? This is perception—an act of inference under uncertainty. Today we'll discover why your brain hallucinates reality from fragmentary sensory data, why phantom limbs feel real, why the rubber hand illusion works, and why you can't tickle yourself. Through the lens of touch and proprioception, we'll understand that perception is not a camera recording the world but a scientist testing hypotheses against sensory evidence. Every sensation is a question; every perception is your brain's best answer.

Opening: The Hard Problem of Perception

[VIEW IMAGES: Hermann von Helmholtz and his theory of unconscious inference in perception]

Close your eyes and run your finger across this desk. You feel wood, smooth and cool, solid and unyielding. But here's what actually happened: Four types of mechanoreceptors in your fingertip deformed, opening ion channels that generated action potentials. These spikes traveled up your arm at 50 meters per second, entered your spinal cord, crossed to the opposite side, ascended through the brainstem, synapsed in the thalamus, and finally reached your somatosensory cortex. What your brain received was a pattern of electrical impulses—nothing more. Yet somehow, from this code of spikes, you constructed the rich, immediate experience of wood against skin. This transformation from sensation to perception is neuroscience's central mystery.

Hermann von Helmholtz recognized this problem in 1867. He noted that the same pattern of retinal stimulation could be caused by infinitely many different arrangements of objects in the world. A small bright circle on your retina could be a distant spotlight or a nearby flashlight or the moon. The sensory data is fundamentally ambiguous—this is the inverse problem. Your brain must infer backward from effects (sensory signals) to causes (objects in the world). Helmholtz proposed that perception is unconscious inference—your brain uses prior knowledge and expectations to make educated guesses about what caused the sensory input. You don't consciously deliberate about whether that circle is the moon; your perceptual system automatically selects the most likely interpretation given the context.

This framework revolutionizes how we think about sensation and perception. Sensation is the transduction of physical energy into neural signals—it's what your receptors do. Perception is the interpretation of those signals to construct a model of the external world—it's what your brain does. Sensation is bottom-up, driven by incoming data. Perception is interactive, shaped by both data and top-down predictions. The distinction matters because perception can diverge from sensation—your brain can perceive things that weren't sensed (hallucinations) or fail to perceive things that were sensed (inattentional blindness). What you experience is not the world itself but your brain's best guess about the world.

Touch offers the clearest window into this process because tactile receptors are the simplest sensory organs. We can record from single receptors, map their properties precisely, and trace their projections to cortex. The machinery of touch is beautifully engineered, yet even with complete knowledge of the receptors, we cannot predict what you will perceive. The gap between sensation and perception is where the brain does its work—where spikes become meaning, where data becomes experience. Today we'll cross that gap.

The Four Channels of Touch

Mechanoreceptors: Four Types, Four Jobs

[VIEW IMAGES: The four types of cutaneous mechanoreceptors in glabrous (hairless) skin]

Your fingertip contains four distinct types of mechanoreceptors, each specialized for different aspects of touch. Merkel complexes sit in the superficial layers of skin, close to the surface, with small receptive fields about 2-3 mm in diameter. They respond to sustained pressure and fine spatial details—these are your texture detectors. When you run your finger across a surface reading Braille, Merkel receptors are doing most of the work. They adapt slowly, continuing to fire as long as the stimulus is present, providing a sustained signal about object shape and texture.

Meissner corpuscles also occupy superficial skin but respond to light touch and low-frequency vibration (20-50 Hz), the sensation known as flutter. They have small receptive fields and adapt rapidly, responding strongly to stimulus onset and offset but falling silent during sustained pressure. When an ant walks across your arm, Meissner corpuscles track its movements. They're motion detectors for slow movements across the skin. Their rapid adaptation means they're exquisitely sensitive to change but blind to static pressure. This is part of why you stop noticing your clothes shortly after putting them on: rapidly adapting receptors such as Meissner corpuscles stop firing almost immediately under constant contact.

Pacinian corpuscles lie deep in the dermis and subcutaneous tissue, with large receptive fields spanning several centimeters. They respond to high-frequency vibration (100-300 Hz) and adapt so rapidly they only fire to stimulus transients. When you grip a vibrating phone or feel a car engine rumble, Pacinian corpuscles encode the vibration. Their beautiful onion-like lamellae act as a high-pass filter, mechanically attenuating low frequencies while transmitting high frequencies to the nerve terminal. This is engineering at the cellular level—the receptor's structure determines its function.
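The high-pass behavior described above can be sketched with a first-order filter. This is only an analogy: the real corpuscle's mechanics are more complex, and the 100 Hz cutoff and the function name `high_pass_gain` are assumptions chosen for illustration, not measured properties.

```python
import math

def high_pass_gain(freq_hz, cutoff_hz):
    """Amplitude gain of a first-order high-pass filter at freq_hz."""
    ratio = freq_hz / cutoff_hz
    return ratio / math.sqrt(1.0 + ratio ** 2)

CUTOFF_HZ = 100.0  # assumed cutoff, for illustration only

# Low frequencies are strongly attenuated; high frequencies pass nearly intact.
for freq in (5, 50, 100, 250):
    print(f"{freq:>4} Hz -> gain {high_pass_gain(freq, CUTOFF_HZ):.2f}")
```

With these assumed numbers, a slow 5 Hz indentation is reduced to about 5% of its amplitude, while a 250 Hz vibration passes at over 90%.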

Ruffini endings are the least understood. Located in deep skin and responsive to skin stretch, they adapt slowly and have large receptive fields. They signal sustained pressure and lateral skin stretch—important for grip control and hand conformation. When you grasp a coffee cup, Ruffini endings help you maintain appropriate grip force. They're also temperature sensitive, firing more rapidly when warmed. Unlike the other three types, Ruffini endings seem to integrate multiple stimulus dimensions—pressure, stretch, and temperature—making them multimodal mechanoreceptors.
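The contrast between slowly adapting receptors (Merkel, Ruffini) and rapidly adapting ones (Meissner, Pacinian) can be illustrated with a toy model. The sketch below assumes a slowly adapting response that tracks stimulus level and a rapidly adapting response that tracks the change between samples. Both functions are hypothetical simplifications, not fitted receptor models.

```python
def slowly_adapting(stimulus, gain=1.0):
    """Toy SA response: follows the stimulus level for as long as it lasts."""
    return [gain * level for level in stimulus]

def rapidly_adapting(stimulus, gain=1.0):
    """Toy RA response: follows the absolute change between successive samples."""
    response = [0.0]
    for previous, current in zip(stimulus, stimulus[1:]):
        response.append(gain * abs(current - previous))
    return response

# Step indentation: skin untouched, pressed for five samples, then released.
step = [0, 0, 1, 1, 1, 1, 1, 0, 0]

sa = slowly_adapting(step)
ra = rapidly_adapting(step)
print("stimulus:", step)
print("SA      :", sa)
print("RA      :", ra)
```

In this toy model the slowly adapting response stays on for the whole press, while the rapidly adapting response marks only the onset and the release.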

Ascending Pathways: The Journey to Consciousness

From Fingertip to Cortex: Two Roads Diverged

[VIEW IMAGES: The dorsal column-medial lemniscal pathway anatomy and organization]

When a mechanoreceptor in your fingertip fires, that signal must travel roughly a meter to reach your brain. But this isn't a simple cable transmission: the signal is processed, filtered, and routed through multiple stations. The primary pathway for fine touch, vibration, and proprioception is the dorsal column-medial lemniscal system, one of the brain's fastest and most precise information highways. The sensory axon enters the spinal cord through the dorsal root, but instead of synapsing immediately, it ascends ipsilaterally (same side) in the dorsal columns, with the gracile fasciculus carrying information from the lower body and the cuneate fasciculus carrying information from the upper body.

These axons maintain somatotopic organization as they ascend, creating a map of the body surface encoded in the spatial arrangement of fibers. They project all the way to the medulla, where they finally make their first synapse in the dorsal column nuclei: the gracile nucleus and the cuneate nucleus. This direct projection preserves temporal precision, essential for detecting vibration and rapid changes in tactile stimulation. In the medulla, the axons of second-order neurons cross the midline as the internal arcuate fibers and then ascend on the opposite side as the medial lemniscus, which is why damage to the right cortex affects sensation on the left side of your body. The crossover (decussation) occurs here, low in the brainstem.

From the medulla, the medial lemniscal fibers ascend through the pons and midbrain to the ventral posterior lateral (VPL) nucleus of the thalamus, the relay station for touch and proprioception from the body. (The thalamus as a whole relays every sense except smell on its way to cortex.) The thalamus is not a passive relay but an active filter, modulating which signals reach cortex based on behavioral state, attention, and arousal. During sleep, thalamic neurons shift to burst mode, blocking sensory transmission; this is why you don't consciously perceive most sensory input while sleeping. From thalamus, third-order neurons project to primary somatosensory cortex (S1) in the postcentral gyrus, where conscious tactile perception emerges. The entire journey from fingertip to cortex takes about 20-30 milliseconds.

The Cerebellar Branch: Unconscious Body Tracking

[VIEW IMAGES: Spinocerebellar pathways and cerebellar role in motor coordination]

But not all sensory information needs to reach consciousness for action. As dorsal column fibers ascend the spinal cord, branches (collaterals) feed proprioceptive information into the spinocerebellar tracts, which project to the cerebellum—the brain's movement coordinator. The dorsal spinocerebellar tract carries information about muscle length and tension from muscle spindles and Golgi tendon organs, primarily from the lower limbs. These signals enter the cerebellum through the inferior cerebellar peduncle, providing real-time feedback about body position and movement that never reaches conscious awareness.

The cerebellum uses this proprioceptive stream to compare intended movements (motor commands descending from cortex) with actual movements (sensory feedback ascending from periphery). When they mismatch—when your hand doesn't move as expected—the cerebellum generates error signals that adjust motor output. This happens entirely unconsciously, at timescales too fast for conscious control. When you reach for a coffee cup and someone moves it mid-reach, your cerebellum detects the mismatch between predicted and actual proprioceptive feedback and corrects your trajectory before you consciously register the cup's movement. This is online motor control, and it requires a dedicated proprioceptive pipeline to the cerebellum.

The cuneocerebellar tract serves the same function for the upper limbs, and the ventral spinocerebellar tract carries information about spinal interneuron activity—essentially monitoring the motor commands themselves. The cerebellum receives a copy of what the spinal cord is doing (efference copy) and compares it to sensory feedback about what the body actually did (reafference). The difference is prediction error, which drives motor learning. When you learn a new motor skill—playing piano, typing, juggling—your cerebellum is learning to predict the sensory consequences of motor commands. With practice, predictions become accurate, and movements become smooth and automatic.
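The idea that prediction error drives motor learning can be sketched with a delta rule. This is a minimal illustration and not a model of cerebellar circuitry: it assumes sensory feedback is a fixed multiple (`true_gain`) of the motor command, and the function name, learning rate, and command values are all hypothetical.

```python
def train_forward_model(true_gain, commands, learning_rate=0.1, epochs=50):
    """Learn to predict feedback as weight * command from prediction errors."""
    weight = 0.0  # the model starts with no knowledge of the limb
    errors = []
    for _ in range(epochs):
        for command in commands:
            actual = true_gain * command      # reafference: what the body did
            predicted = weight * command      # prediction from the efference copy
            error = actual - predicted        # prediction error
            weight += learning_rate * error * command  # delta-rule update
            errors.append(abs(error))
    return weight, errors

weight, errors = train_forward_model(true_gain=2.0, commands=[0.5, 1.0, -1.0, 0.8])
print(f"learned gain: {weight:.3f}")
print(f"first error: {errors[0]:.3f}, last error: {errors[-1]:.6f}")
```

As practice accumulates, the learned weight approaches the assumed gain and the prediction error shrinks toward zero, which is the toy counterpart of movements becoming smooth and automatic.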

The Anterolateral System: Temperature and Crude Touch

A second ascending pathway, the anterolateral system (also called the spinothalamic tract), carries information about pain, temperature, itch, and crude touch, sensations that don't require fine spatial or temporal precision. Unlike the dorsal column system, sensory neurons in the anterolateral pathway synapse immediately upon entering the spinal cord. Second-order neurons cross the midline within a few segments and ascend in the anterior and lateral white matter of the spinal cord. This pathway is slower (signals travel at 10-20 m/s compared to 50-70 m/s in the dorsal columns) and less spatially precise, but it's adequate for detecting painful stimuli, temperature changes, and coarse pressure.
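The speed difference between the two pathways can be turned into rough travel times. The sketch below uses the velocity ranges quoted above. The 1 m path length is an assumed round number, and synaptic delays are ignored, so the results are order-of-magnitude estimates only.

```python
def conduction_time_ms(path_length_m, velocity_m_per_s):
    """Travel time in milliseconds, ignoring synaptic delays."""
    return 1000.0 * path_length_m / velocity_m_per_s

PATH_LENGTH_M = 1.0  # assumed path length, for illustration only

# Velocity ranges (m/s) as quoted in the text: (slowest, fastest).
pathways = {
    "dorsal column": (50.0, 70.0),
    "anterolateral": (10.0, 20.0),
}

for name, (slowest, fastest) in pathways.items():
    quickest_ms = conduction_time_ms(PATH_LENGTH_M, fastest)
    longest_ms = conduction_time_ms(PATH_LENGTH_M, slowest)
    print(f"{name}: {quickest_ms:.0f} to {longest_ms:.0f} ms")
```

Under these assumptions the dorsal column signal arrives in about 14 to 20 ms, while the anterolateral signal takes about 50 to 100 ms.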

The existence of two parallel pathways reveals an engineering principle: different sensory tasks require different neural implementations. Fine touch discrimination—reading Braille, manipulating small objects—requires fast conduction, precise timing, and detailed spatial maps, hence the direct dorsal column pathway. Temperature detection and crude touch don't require such precision; slower, shorter, multiply-synapsing pathways suffice. Clinical neurology exploits this separation: specific spinal cord lesions can selectively damage one pathway while sparing the other, causing dissociated sensory loss where patients lose fine touch but retain temperature sensation, or vice versa.

Spatial Resolution and the Cortical Homunculus

Two-Point Discrimination: Not All Skin Is Created Equal

Take two sharp pencil points and touch them to your fingertip, separated by 2 millimeters. You'll feel two distinct points. Now touch them to your back with the same 2 mm separation. You'll feel only one point. This is the two-point discrimination threshold, and it varies dramatically across your body—2 mm on fingertips, 30 mm on the back, 40 mm on the thigh. The difference reflects receptor density. Your fingertips have about 240 Merkel receptors per square centimeter; your back has about 15. More receptors means finer spatial resolution, the ability to distinguish nearby touch points.
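A back-of-the-envelope calculation shows how far the quoted densities go toward explaining the quoted thresholds. The sketch assumes receptors sit on a uniform square grid, which is a simplification, and `mean_spacing_mm` is a hypothetical helper.

```python
import math

def mean_spacing_mm(density_per_cm2):
    """Center-to-center spacing in mm if receptors sat on a square grid."""
    return 10.0 / math.sqrt(density_per_cm2)

# Densities and two-point thresholds as quoted in the text.
sites = {
    "fingertip": {"density": 240, "threshold_mm": 2},
    "back": {"density": 15, "threshold_mm": 30},
}

for name, site in sites.items():
    print(f"{name}: spacing ~{mean_spacing_mm(site['density']):.2f} mm, "
          f"two-point threshold {site['threshold_mm']} mm")

spacing_ratio = mean_spacing_mm(15) / mean_spacing_mm(240)
threshold_ratio = 30 / 2
print(f"spacing ratio {spacing_ratio:.1f}x, threshold ratio {threshold_ratio:.1f}x")
```

Under the grid assumption, receptor spacing differs by a factor of 4 between the two sites, while the quoted thresholds differ by a factor of 15.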

But receptor density alone doesn't determine perceptual acuity. The cortical representation matters even more. Wilder Penfield's somatosensory homunculus, mapped through direct cortical stimulation during neurosurgery from the 1930s through the 1950s, revealed that cortical area dedicated to each body part correlates with its sensory importance, not its physical size. Your hands and face, with their rich tactile discrimination, occupy more cortical territory than your entire torso and legs combined. The homunculus is grotesque, a tiny body with enormous hands, lips, and tongue, but it reflects functional demands. You manipulate the world with your hands and explore it with your mouth; your brain allocates processing resources accordingly.

The somatosensory cortex is organized somatotopically: neighboring body parts map to neighboring cortical regions. But this map is not fixed. In people who lose a limb, the cortical territory for that limb gets invaded by neighboring representations. Amputees often experience referred sensations: touching the face evokes sensations in the phantom hand because the face representation has expanded into the vacant hand area. Use reshapes the map as well: blind Braille readers show enlarged cortical representations of the reading finger. Experience-dependent plasticity is not confined to somatosensory cortex, either. London taxi drivers, who memorize the city's street layout, have enlarged posterior hippocampi. The cortical map is plastic, shaped by experience and use.

This plasticity creates a puzzle. If the map changes with experience, how do you maintain a stable sense of your body? The answer is that perception combines two sources of information: the sensory input (where touch occurred) and the body schema (your brain's model of body structure). When these conflict, strange perceptions arise. The rubber hand illusion, which we'll explore shortly, demonstrates that your sense of body ownership is not hardwired to your actual body—it's a flexible inference that can be transferred to external objects under the right conditions.

Proprioception: The Hidden Sense

Knowing Where Your Body Is Without Looking

Close your eyes and touch your nose. Easy. Now consider what's required: you must know where your nose is in space and where your hand is in space, then plan and execute a movement that brings them together. But you never sense your body position directly—you infer it from three sources: muscle spindles that signal muscle length, Golgi tendon organs that signal muscle tension, and joint receptors that signal joint angle. These proprioceptors constantly update your brain about body configuration, creating a dynamic internal model of your physical self.

Muscle spindles are stretch receptors embedded in skeletal muscles, running parallel to the main muscle fibers. They contain specialized intrafusal fibers wrapped by two types of sensory endings: annulospiral endings that respond to both the length and rate of change of muscle stretch, and flower-spray endings that respond mainly to sustained stretch. When your biceps lengthens as you extend your arm, spindles in the biceps increase their firing rate, signaling arm extension. This is primary proprioception—direct sensing of body position and movement.

Golgi tendon organs sit at muscle-tendon junctions, woven into the collagen fibers. They measure muscle tension—the force your muscle is generating. Unlike spindles, which respond to passive stretch, Golgi organs respond to active muscle contraction. They're safety devices, providing negative feedback when muscle tension becomes dangerously high. When you grip something with maximum force, Golgi organs can trigger reflex inhibition, preventing muscle or tendon damage. But they also provide crucial information about load—whether that coffee cup is full or empty.

Joint receptors—Pacinian corpuscles, Ruffini endings, and Golgi-like organs in joint capsules and ligaments—were once thought to be the primary source of position information. But experiments in the 1970s showed that joint receptors mostly fire at the extremes of joint range, not throughout the range. Your sense of elbow angle when your arm is halfway extended doesn't come from joint receptors. Instead, it comes from integrating muscle spindle signals from flexors and extensors. The brain uses a distributed code—position emerges from the pattern of signals across multiple receptor types, not from any single source.

Perception as Bayesian Inference

The Brain as Scientist

Your brain faces a fundamental problem: sensory data is always ambiguous and noisy. The same receptor activation pattern could be caused by many different stimuli. How does your brain decide what's really out there? The answer, formalized by modern computational neuroscience, is Bayesian inference. Your brain combines two sources of information: the sensory likelihood (how likely is this receptor pattern given different possible stimuli?) and the prior probability (how likely is each stimulus based on past experience?). The perception you experience is the stimulus that maximizes the posterior probability—the best explanation given both the current data and your prior knowledge.
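The combination of likelihood and prior can be made concrete with a small discrete example. The hypotheses and all probabilities below are invented for illustration, and `posterior` is a hypothetical helper that applies Bayes' rule over a finite set of candidate causes.

```python
def posterior(prior, likelihood):
    """Bayes' rule over discrete hypotheses: posterior ~ prior * likelihood."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalized.values())
    return {h: value / evidence for h, value in unnormalized.items()}

# Made-up numbers: how often each material is encountered (prior), and how
# probable the current spike pattern is under each material (likelihood).
prior = {"wood": 0.6, "metal": 0.3, "glass": 0.1}
likelihood = {"wood": 0.2, "metal": 0.5, "glass": 0.6}

post = posterior(prior, likelihood)
percept = max(post, key=post.get)  # maximum a posteriori (MAP) estimate

for hypothesis, probability in post.items():
    print(f"{hypothesis}: {probability:.2f}")
print("percept:", percept)
```

In this example the prior favors wood and the likelihood favors glass, yet the posterior peaks at metal: the percept is neither what experience alone nor what the data alone would suggest.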

This Bayesian framework explains many perceptual phenomena. When sensory data is ambiguous, your perception is dominated by priors—you see what you expect to see. When sensory data is strong and clear, perception tracks the data, overriding expectations. Most perception lies between these extremes, reflecting a weighted combination of data and expectation. The weights depend on uncertainty: when you're confident in your expectations (strong prior) and uncertain about the data (noisy signal), priors dominate. When you're uncertain about what to expect (weak prior) but confident in the data (clear signal), data dominates.
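The uncertainty-dependent weighting described above has a simple closed form when both the prior and the sensory noise are assumed to be Gaussian. The sketch below is a standard precision-weighted average. The Gaussian assumption and the specific numbers are illustrative choices, not claims about any particular neural circuit.

```python
def combine(prior_mean, prior_var, observation, observation_var):
    """Posterior mean and variance for a Gaussian prior and Gaussian noise."""
    prior_precision = 1.0 / prior_var
    data_precision = 1.0 / observation_var
    total_precision = prior_precision + data_precision
    mean = (prior_precision * prior_mean + data_precision * observation) / total_precision
    return mean, 1.0 / total_precision

# The prior expects 0; the data says 10. Which one wins depends on the variances.
noisy_mean, _ = combine(prior_mean=0.0, prior_var=1.0, observation=10.0, observation_var=9.0)
clear_mean, _ = combine(prior_mean=0.0, prior_var=9.0, observation=10.0, observation_var=1.0)

print(f"strong prior, noisy data: percept = {noisy_mean:.2f}")
print(f"weak prior, clear data:   percept = {clear_mean:.2f}")
```

With a strong prior and noisy data the estimate stays near the expectation (about 1), and with a weak prior and clear data it moves close to the observation (about 9).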

Illusions are not failures of perception—they're successes. They reveal the priors your brain uses to interpret ambiguous data. The Müller-Lyer illusion (two lines with inward or outward pointing arrows at their ends) causes you to misperceive line length because your visual system uses a prior that inward-pointing angles typically indicate receding edges (like room corners) while outward-pointing angles indicate approaching edges. Your brain applies a depth correction, making the "receding" line appear longer. The illusion works even when you know it's an illusion because the inference is mandatory, not conscious. Your perceptual system is locked into using these priors because they're usually correct in natural scenes.

The rubber hand illusion demonstrates that body ownership is an inference, not a fixed property. The setup is simple: your real hand is hidden, a rubber hand is placed in view, and an experimenter strokes both hands synchronously. Within minutes, most people report feeling the touch on the rubber hand and feeling that the rubber hand is their own hand. The illusion works because your brain infers body ownership from three cues: visual appearance (looks like a hand), spatial location (near where your hand should be), and multisensory synchrony (vision and touch correlate). When these cues align, even for a rubber hand, your brain concludes "this is my hand." The inference overrides the truth.

Phantom Limbs and Predictive Processing

When the Brain Predicts What Isn't There

Approximately 80% of amputees experience phantom limbs—vivid sensations that the missing limb is still present, often in specific positions and sometimes in pain. Vilayanur Ramachandran's work with phantom limbs revealed that these aren't merely memories but active perceptual constructions. Some patients feel their phantom hand clenched in a painful fist. Ramachandran invented mirror therapy: the patient places their intact hand in a mirror box so that its reflection appears where the phantom limb should be. When they open and close the real hand while watching the mirror, many patients feel their phantom hand unclenching, relieving years of phantom pain. Vision overrode the phantom sensation because visual evidence is usually reliable.

The predictive processing framework explains phantom limbs elegantly. Your brain maintains a hierarchical generative model that predicts sensory input at every level. Higher areas generate predictions about what lower areas should be sensing; lower areas send prediction errors upward when reality doesn't match predictions. Perception is the process of minimizing prediction error—updating the model until predictions match sensory data. But when a limb is amputated, the high-level body model hasn't updated. It still predicts that the limb exists and sends motor commands to it. The lack of sensory feedback is interpreted as continued limb presence, not absence. The phantom is your brain's prediction in the absence of contradictory data.
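A heavily simplified sketch of this account follows. It treats perception as a single estimate nudged by two prediction errors, one against the body model and one against sensory input, each weighted by an assumed precision. Modeling amputation as zero sensory precision is an interpretive assumption made here for illustration, and the function and parameter names are hypothetical.

```python
def settle(prior_belief, sensory_input, prior_precision, sensory_precision,
           steps=200, rate=0.05):
    """Iteratively reduce precision-weighted prediction error."""
    estimate = prior_belief
    for _ in range(steps):
        sensory_error = sensory_input - estimate  # mismatch with incoming data
        prior_error = prior_belief - estimate     # mismatch with the body model
        estimate += rate * (sensory_precision * sensory_error
                            + prior_precision * prior_error)
    return estimate

# 1.0 stands for "limb present", 0.0 for "limb absent" (arbitrary coding).
with_feedback = settle(prior_belief=1.0, sensory_input=0.0,
                       prior_precision=1.0, sensory_precision=4.0)
no_feedback = settle(prior_belief=1.0, sensory_input=0.0,
                     prior_precision=1.0, sensory_precision=0.0)

print(f"reliable contradictory input: estimate = {with_feedback:.2f}")
print(f"no usable input:              estimate = {no_feedback:.2f}")
```

When reliable input contradicts the body model, the estimate is pulled most of the way toward the data. When no usable input arrives, nothing corrects the prediction and the estimate stays at "limb present".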

[VIEW IMAGES: Ramachandran's mirror box therapy for phantom limb pain treatment]

This raises a profound question: how much of your normal body perception is similarly predictive? When you feel your foot without looking at it, is that sensation driven by actual sensory input or by your brain's prediction that your foot is there? The answer is both. Under normal conditions, predictions and sensory data agree, so you can't distinguish them. But when they conflict—as in the rubber hand illusion or phantom limbs—you discover that perception is not passive reception but active inference. Your brain is constantly hallucinating your body; it just usually hallucinates correctly because it has accurate data to constrain the hallucination.

You can't tickle yourself because your brain predicts the sensory consequences of your own actions and subtracts the prediction from actual sensation. When you move your finger across your skin, your motor system sends an efference copy of the motor command to a forward model, which predicts the sensory feedback that movement will generate and passes that prediction to sensory areas. The actual sensory input is compared to the prediction, and only the difference (prediction error) generates conscious sensation. Since your prediction is accurate, the net sensation is minimal. But when someone else tickles you, there's no prediction, so the full sensory signal reaches awareness. This is sensory attenuation: your brain filters out expected sensations to highlight the unexpected. It's another example of perception as inference, not passive recording.
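The subtraction at the heart of sensory attenuation can be written in a few lines. This is a deliberately minimal sketch: the intensity values are arbitrary, and `perceived_intensity` is a hypothetical function standing in for a much richer comparison process.

```python
def perceived_intensity(actual, predicted):
    """Only the mismatch between actual and predicted input is perceived."""
    return abs(actual - predicted)

TOUCH = 1.0  # arbitrary intensity of the stroke on the skin

# Self-generated touch: the prediction is close to the actual input (assumed 0.9).
self_touch = perceived_intensity(actual=TOUCH, predicted=0.9)
# Touch from someone else: no motor command, so no prediction.
external_touch = perceived_intensity(actual=TOUCH, predicted=0.0)

print(f"self-produced touch: {self_touch:.2f}")
print(f"external touch:      {external_touch:.2f}")
```

The same physical stroke yields a small perceived signal when it is predicted and the full signal when it is not.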

Embodiment, Tools, and Extended Cognition

Where Does Your Body End?

When a skilled tennis player uses a racket, do they feel the ball hitting the racket strings or hitting their hand? Studies of tool use suggest that your brain incorporates tools into your body schema. In recordings from macaque parietal cortex, neurons whose receptive fields covered the hand expanded those fields to include a rake after just minutes of using it. The tool becomes, functionally, part of your body. Blind individuals using canes report feeling not the cane vibrating in their hand but the texture of the ground at the cane tip. The brain remaps tactile sensation from hand to tool tip, perceptually extending the body boundary.

This embodiment of tools happens rapidly and automatically. In experiments where participants reach for objects with a mechanical grabber, their perceived arm length increases to include the grabber within seconds. When the grabber is removed, arm length perception returns to normal within minutes. The body schema is not a fixed anatomical representation but a flexible tool for action—it represents "what I can reach and manipulate" rather than "what is biologically me." This makes evolutionary sense: stone tools have been part of human action for 3 million years. A brain that could rapidly incorporate tools into body representation would have enormous advantages.

The extended cognition hypothesis pushes this further, arguing that cognitive processes literally extend into the environment. When you perform arithmetic with pencil and paper, is the paper just external storage or is it part of your cognitive system? Andy Clark and David Chalmers argue that the paper is functionally equivalent to internal working memory—it serves the same computational role. The cognitive process is distributed across brain and paper. Similarly, your smartphone is arguably part of your cognitive system—you offload memory storage and retrieval to it, and its absence impairs your cognitive performance just as brain lesions would.

Cultural Differences in Perception

Expertise, Language, and Perceptual Reality

The famous Inuit snow example is often overstated—they don't have hundreds of words for snow—but the underlying principle is real: expertise and language shape perceptual discrimination. Wine experts genuinely perceive more distinctions in wine flavors than novices; their sensory systems haven't changed, but their perceptual categorization has. They've learned to attend to subtle features and have language to encode the distinctions. This isn't just better memory—it's different perception. Brain imaging shows that experts recruit more neural resources during perceptual tasks in their domain of expertise.

Cross-cultural studies reveal that perceptual illusions vary in strength across cultures. The Müller-Lyer illusion is weaker in people from cultures with round dwellings rather than rectangular architecture, suggesting that the prior expectations shaping the illusion are learned from environmental statistics. The Ebbinghaus illusion (size perception influenced by surrounding context) varies with cultural emphasis on holistic versus analytic processing. East Asian participants show stronger context effects than Western participants, correlating with cultural differences in attentional focus and social cognition.

Indigenous tracking skills demonstrate how cultural practice shapes perception. Expert trackers perceive information in sand, mud, or vegetation that is literally invisible to untrained observers. They're not seeing different photons—the sensory input is identical—but their perceptual system has learned different features and patterns to extract. This expertise develops through years of practice and cultural transmission. The relevant knowledge cannot be fully articulated in language; it's embodied in perceptual skill. This challenges the notion that perception is purely bottom-up. Culture shapes perception through attention, expectation, and learned patterns of feature extraction.

Clinical and Technological Applications

Sensory Substitution and Haptic Interfaces

Sensory substitution devices translate one sensory modality into another, typically for people with sensory loss. The original device, invented by Paul Bach-y-Rita in 1969, converted camera images into tactile stimulation patterns on the back. Blind users learned to "see" through touch, perceiving objects, motion, and even perspective. After hours of training, users reported that perception shifted from "patterns on my back" to "objects in space out there"—a genuine perceptual remapping. Modern versions use tongue stimulation, which offers higher resolution than back skin, allowing users to read text and navigate obstacles.
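The core translation step, from a camera image to a coarse pattern of skin stimulators, can be sketched as block averaging followed by thresholding. This is an illustrative reconstruction, not the original device's circuitry. The function name, the threshold, and the tiny grid sizes are assumptions, and the image dimensions are assumed to divide evenly by the grid dimensions.

```python
def to_tactile_grid(image, rows, cols, threshold=0.5):
    """Downsample a brightness image (values 0..1) to an on/off stimulator grid."""
    height, width = len(image), len(image[0])
    block_h, block_w = height // rows, width // cols
    grid = []
    for r in range(rows):
        grid_row = []
        for c in range(cols):
            # Collect the pixels that fall under this stimulator.
            block = [image[y][x]
                     for y in range(r * block_h, (r + 1) * block_h)
                     for x in range(c * block_w, (c + 1) * block_w)]
            mean_brightness = sum(block) / len(block)
            grid_row.append(1 if mean_brightness >= threshold else 0)
        grid.append(grid_row)
    return grid

# A 4x4 "camera image" with a bright vertical bar on the right half.
image = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
pattern = to_tactile_grid(image, rows=2, cols=2)
print(pattern)  # [[0, 1], [0, 1]]
```

The bright bar on the right of the image becomes active stimulators on the right of the grid, so the spatial relationships in the scene survive the change of modality.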

The success of sensory substitution reveals that the brain's perceptual machinery is modality-independent. What matters is not whether information enters through eyes or skin but whether it carries the relevant relational structure. The visual system extracts spatial relationships; the tactile system can provide those same relationships. The brain discovers the correlations between sensory input and motor actions, learning that moving the camera rightward shifts the tactile pattern leftward, just as eye movements shift visual input. This learning constructs a perceptual space that feels external and visual, even though the input is tactile.

Haptic interfaces in virtual reality face the challenge of simulating touch realistically. Current VR controllers use vibration motors for basic feedback, but this captures only Pacinian-type high-frequency information. Simulating texture (Merkel), flutter (Meissner), and skin stretch (Ruffini) requires more sophisticated actuators. Emerging technologies use ultrasound to create mid-air tactile sensations and skin stretch devices to simulate object surfaces. The goal is to create complete somatosensory immersion, where virtual objects feel as real as physical ones. The rubber hand illusion suggests this is achievable—if multisensory signals correlate appropriately, the brain will infer that virtual objects are real.

[VIEW IMAGES: Modern sensory substitution devices translating vision into tactile patterns]

Synthesis: Building Reality from Spikes

We've traced perception from mechanoreceptor to phenomenology. The journey reveals that perception is not passive reception but active construction—an inference under uncertainty. Your four types of tactile receptors encode pressure, texture, vibration, and stretch. Proprioceptors encode body position and movement. But these signals are meaningless until interpreted. Your brain maintains generative models that predict sensory input and updates those models to minimize prediction error. What you perceive is not the raw sensory data but the brain's best explanation of what's causing the data—constrained by both current input and prior expectations built from lifetime experience.

This framework explains phenomena that puzzled earlier theories. Illusions arise when priors override data. Phantom limbs arise when predictions continue in the absence of data. The rubber hand illusion works because inference from multisensory correlation overrides anatomical reality. Tool incorporation happens because body schema represents functional reach, not anatomical extent. Cultural differences in perception arise because priors are learned from environmental statistics that vary across cultures. In every case, perception is inference—hypothesis testing constrained by data.

The engineering constraints we identified in earlier chapters—energy budgets, noise, speed—all shape perceptual inference. Prediction reduces energy cost by processing only errors, not full signals. Prior expectations provide noise rejection, maintaining stable perception despite noisy sensors. Rapid adaptation filters unchanging stimuli, focusing processing on changes that might require action. These aren't bugs but features—optimal solutions to the problem of building reliable perception from unreliable components in a resource-constrained system.

Understanding sensation and perception isn't just academic curiosity—it's essential for understanding neurological disorders where perception fails. Phantom limb pain, complex regional pain syndrome, focal dystonia, and many psychiatric conditions involve disrupted perceptual inference. Restoring normal perception requires understanding how the brain constructs reality from data. And as we build artificial systems—prosthetics, sensory substitution devices, virtual reality—we must respect the inferential nature of perception. It's not enough to provide sensory data; we must provide data structured in ways that support accurate inference. The brain is a scientist testing hypotheses. Our job is to provide evidence worth testing.

Thought Questions for Discussion

The Reality Question: If perception is always inference—never direct access to reality—how can we know whether our perceptual inferences are accurate? What makes some inferences better than others? Consider that illusions are systematic errors shared across people, while hallucinations are idiosyncratic errors unique to individuals. What does this tell us about the relationship between perception and objective reality?

The Embodiment Puzzle: If your brain can incorporate tools into your body schema within minutes, what are the limits of embodiment? Could you incorporate a car, feeling the road through its tires? Could you incorporate a robot avatar, feeling touch through its sensors? What would happen to your sense of self if your body schema became completely flexible and could be transferred to any physical system?

The Cultural Perception Problem: If culture shapes perception through learned priors and attention patterns, are people from different cultures literally perceiving different realities? Does this mean objective perceptual truth doesn't exist, or does it mean that different perceptual strategies can all be valid ways of extracting true information from the world? How do we resolve perceptual disagreements when they might reflect different but equally valid perceptual inferences?

Practice Questions:

• The four types of mechanoreceptors are Merkel complexes (texture), Meissner corpuscles (_______), Pacinian corpuscles (high-frequency _______), and Ruffini endings (_______).

• Two-point discrimination threshold is small (~2 mm) on fingertips because receptor _______ is high and cortical _______ is large.

• Muscle spindles measure muscle _______, while Golgi tendon organs measure muscle _______.

• Ian Waterman lost all _______ and _______ sensation from the neck down, requiring visual feedback to control movement.

• Bayesian inference combines sensory _______ (data) with _______ probability (expectations) to generate perception.

• The rubber hand illusion works because the brain infers body ownership from visual appearance, spatial location, and _______ synchrony.

• Phantom limbs arise because high-level _______ continue predicting limb presence despite absence of sensory feedback.

• You can't tickle yourself because your brain generates an _______ copy that predicts and subtracts expected sensory feedback.

[PREVIEW: Visual perception, predictive processing, and the neuroscience of seeing]