Vision: The Brain's Beautiful Lie

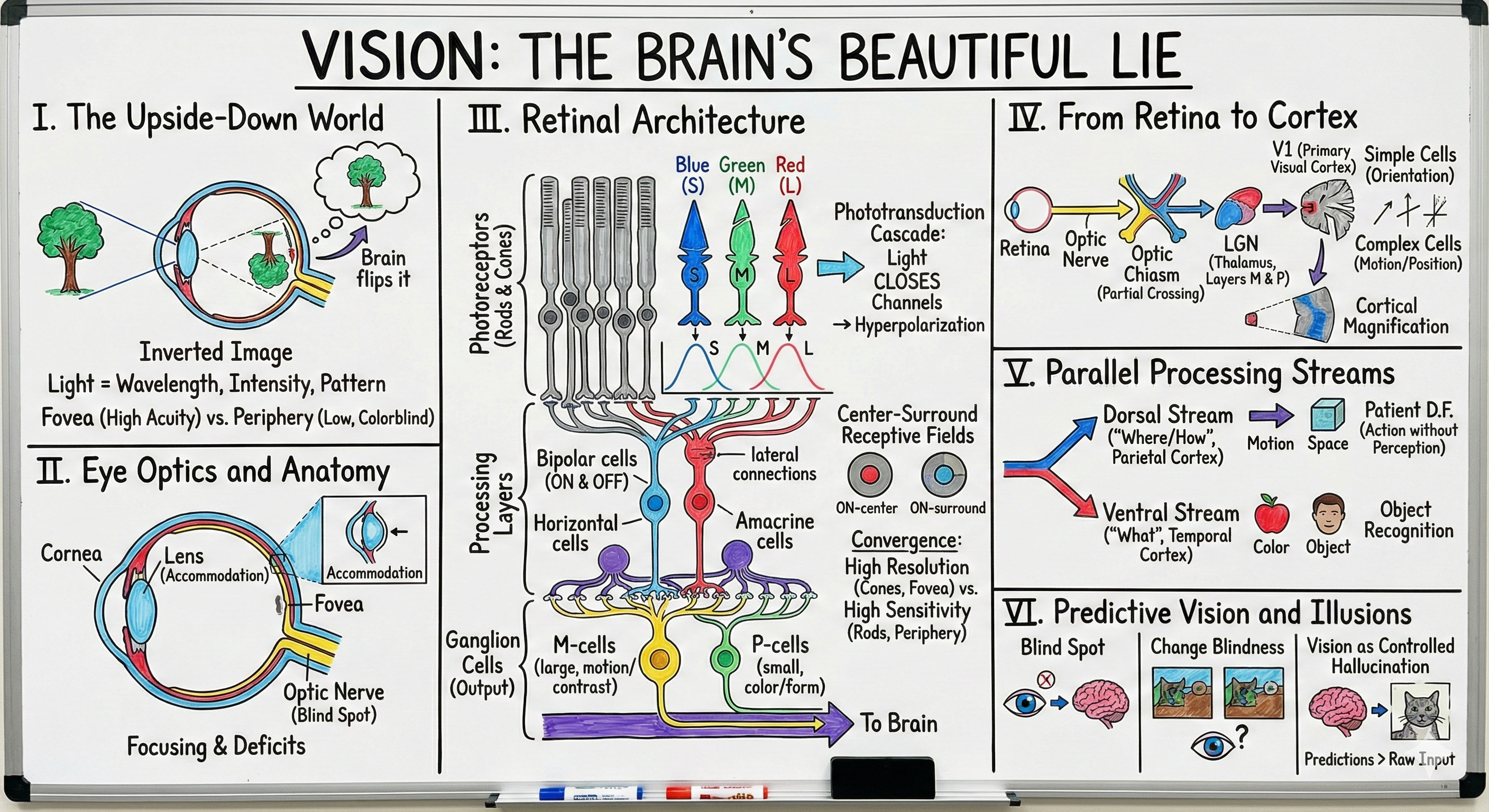

• The inverted image problem and Hermann von Helmholtz

• Light as information carrier: wavelength, intensity, spatial pattern

• Regional specialization: fovea vs periphery

II. Eye Optics and Anatomy (10 min)

• Cornea, lens, and accommodation

• Visual field deficits and clinical diagnosis

• The blind spot you never notice

III. Retinal Architecture (20 min)

• Rods vs cones: scotopic vs photopic vision

• Phototransduction cascade: light closes channels

• Center-surround receptive fields

• Convergence and the resolution-sensitivity trade-off

IV. From Retina to Cortex (15 min)

• M and P ganglion cell pathways

• Lateral geniculate nucleus architecture

• Primary visual cortex: simple and complex cells

• Retinotopy and cortical magnification

V. Parallel Processing Streams (12 min)

• Ventral "what" pathway: object and color

• Dorsal "where" pathway: motion and space

• Patient D.F. and visual form agnosia

VI. Predictive Vision and Illusions (8 min)

• Your brain fills in the blind spot

• Change blindness and inattentional blindness

• Vision as controlled hallucination

Right now, you believe you're seeing the world. You're not. Your retinas receive an inverted, blurred, two-dimensional projection with a hole in it, sampled by cells that report color poorly unless you're looking almost directly at something. This raw data doesn't reach your consciousness. It passes through the ten layers of the retina before the first signals leave your eye, is then filtered through the thalamus, reorganized across multiple cortical areas, and finally assembled into the vivid, stable, three-dimensional perception you call "sight." Today we'll trace the 100-millisecond journey from photon to perception, discovering why your peripheral vision is nearly colorblind, how your fovea packs more neurons than some entire brains, why you're blind for 40 minutes every day during eye movements, and how your visual cortex dedicates more processing power to your thumbnail at arm's length than to your entire peripheral world. You'll learn that the blind spot you never notice is being filled in with hallucinated content, that motion and color are computed in entirely different brain regions that can break independently, and that what you see has more to do with what your brain expects than what your eyes report. Vision isn't about seeing reality; it's about constructing a useful fiction that keeps you alive.

Opening: The Upside-Down Problem

[VIEW IMAGES: Inverted retinal image diagram showing how the lens flips the world upside down]

Close your right eye. Now look straight ahead with your left eye and slowly move your left hand from the far left side of your vision toward the center while wiggling your fingers. Keep looking straight ahead—don't cheat by looking at your hand. Your fingers will disappear when your hand reaches about 15 degrees left of center. You've just found your blind spot, a hole in your vision the size of 13 full moons where your optic nerve exits the retina and no photoreceptors exist. Yet you never notice this enormous gap because your brain fills it in with hallucinated content matching the surroundings. This isn't a metaphor—your visual cortex is literally inventing visual information for a part of the world you cannot see.

[VIEW IMAGES: Blind spot demonstrations you can try]

The inverted retinal image posed an even deeper puzzle. Hermann von Helmholtz, the German physicist and physician who dominated 19th-century sensory physiology, understood that the cornea and lens project an upside-down, reversed image onto the retina—just like a camera obscura. Top becomes bottom, left becomes right. Yet we perceive the world right-side up. Helmholtz proposed that vision is an act of "unconscious inference"—the brain doesn't passively receive images but actively interprets them based on experience and expectation. In 1896, George Stratton tested this idea by wearing inverting goggles that flipped his visual world. After several days of nauseating disorientation, his brain adapted, and the world appeared normal again despite his retinas still receiving inverted images. When he removed the goggles, he had to readapt to normal vision. The brain doesn't care what the raw input looks like—it learns whatever mapping keeps behavior functional.

[SEARCH: "George Stratton inverting goggles experiment" - see how vision adapts to inverted input]

Vision begins with light, electromagnetic radiation with wavelengths between roughly 400 and 700 nanometers. This narrow slice of the electromagnetic spectrum carries information along three dimensions: wavelength encodes color, intensity encodes brightness, and spatial pattern encodes form. But the eye doesn't passively capture this information like a camera. The human retina contains approximately 130 million photoreceptors but only about 1.2 million ganglion cells sending information to the brain. This roughly 100:1 convergence means the retina must compress its input about a hundredfold, so a great deal of computation happens in the retina itself. Vision is an act of data reduction before signals even leave the eye.
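
The convergence figure is simple arithmetic. A minimal sketch using only the two counts quoted above (the function and variable names are illustrative):

```python
# Approximate cell counts quoted in the text.
PHOTORECEPTORS = 130_000_000
GANGLION_CELLS = 1_200_000

def convergence_ratio(inputs: int, outputs: int) -> float:
    """Average number of input cells feeding each output cell."""
    return inputs / outputs

ratio = convergence_ratio(PHOTORECEPTORS, GANGLION_CELLS)
# Fraction by which the number of parallel channels shrinks.
channel_reduction = 1 - GANGLION_CELLS / PHOTORECEPTORS

if __name__ == "__main__":
    print(f"about {ratio:.0f} photoreceptors per ganglion cell")
    print(f"channel count reduced by about {channel_reduction:.1%}")
```

Note that this counts channels, not computation: it says how much the signal must be condensed, not how the work is divided between retina and brain.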

The visual field, the total area you can see without moving your eyes, is dramatically non-uniform. Hold your arm straight out and look at your thumbnail. That tiny patch, subtending about 2 degrees of visual angle, is processed by nearly half of your primary visual cortex. It falls on your fovea, a specialized pit in the center of your retina packed with cone photoreceptors at a density of up to about 200,000 per square millimeter. This region delivers the high-acuity color vision you assume extends across your entire visual world. It doesn't. Everything more than 5 degrees from the center, about 95% of your visual field, is peripheral vision, with progressively worse acuity and color sensitivity. You're close to color-blind in your far periphery and don't realize it because you rarely inspect anything there without moving your fovea to it.

[VIEW IMAGES: Foveal structure and cone photoreceptor density]

[READ: The Human Visual System: Structure and Function - comprehensive review]

The Eye as Optical Instrument

Optics and Image Formation

[VIEW IMAGES: Cross-section of human eye showing optical components and light path]

The eye is a beautifully engineered optical instrument evolved to balance conflicting constraints: maximum light gathering, minimum aberration, compact size, and variable focus. The cornea, that transparent dome covering the front of your eye, provides about two-thirds of your eye's total refractive power—approximately 43 diopters out of the eye's total 58. It's fixed-focus, shaped by the mechanical properties of collagen fibers arranged in precise orthogonal layers. The cornea is avascular—it contains no blood vessels—because any vasculature would scatter light and degrade image quality. Instead, oxygen diffuses in from the tear film coating the surface, and nutrients arrive from the aqueous humor behind it. This is why you shouldn't sleep in contact lenses—you're suffocating your cornea.

[VIEW IMAGES: Corneal structure showing collagen fiber organization]

The crystalline lens provides the remaining 15 diopters and, crucially, is adjustable. The lens is suspended by zonule fibers attached to ciliary muscles. When you look at distant objects, the ciliary muscles relax, the zonules pull tight, and the lens flattens. When you focus on nearby objects—accommodation—the ciliary muscles contract, releasing tension on the zonules, and the lens's natural elasticity makes it bulge into a more spherical shape. This automatic refocusing happens 100,000 times per day without conscious thought. With age, the lens loses elasticity—presbyopia—which is why people over 45 need reading glasses. The lens can't bulge enough to focus on near objects anymore, a mechanical failure of the protein scaffolding inside lens cells.
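
The focusing demand on the lens can be estimated with the standard vergence relation, in which an object at distance d meters requires 1/d diopters of extra power relative to focus at infinity. The sketch below is a thin-lens approximation, and the function names and example amplitudes are assumptions for illustration, not measurements from this lecture:

```python
def accommodation_demand_diopters(object_distance_m: float) -> float:
    """Extra optical power needed to focus at object_distance_m (thin-lens approximation)."""
    return 1.0 / object_distance_m

def near_point_m(max_accommodation_diopters: float) -> float:
    """Closest distance that can be brought into focus for a given accommodation amplitude."""
    return 1.0 / max_accommodation_diopters

if __name__ == "__main__":
    # Reading at 25 cm asks the lens for about 4 extra diopters.
    print(accommodation_demand_diopters(0.25))
    # Hypothetical amplitudes: a flexible young lens versus a stiff older one.
    for amplitude in (10.0, 2.0):
        print(f"{amplitude} D of accommodation -> near point {near_point_m(amplitude):.2f} m")
```

With the hypothetical 2-diopter amplitude, the near point sits at half a meter, farther than a comfortable reading distance, which is the practical meaning of presbyopia.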

[VIEW IMAGES: Lens accommodation mechanism showing near vs far focus]

Light travels through the transparent vitreous humor filling the eye's interior and finally strikes the retina. But here's something remarkable: the retina is installed backward. Photoreceptors point away from incoming light, toward the back of the eye where the retinal pigment epithelium recycles photopigments and absorbs stray photons. Light must pass through several layers of neurons, blood vessels, and support cells before reaching the photoreceptors. This seemingly poor design reflects a critical metabolic constraint: photoreceptors have among the highest energy consumption per unit mass of any tissue in the body, so they need intimate contact with the retinal pigment epithelium and the blood supply of the choroid behind it.

[VIEW IMAGES: Inverted retinal structure showing backward photoreceptor orientation]

[READ: Anatomy and Physiology of the Human Eye - detailed structural review]

Visual Fields and Clinical Diagnosis

The visual field for a single eye extends approximately 60 degrees nasally, 90 degrees temporally, 60 degrees superiorly, and 75 degrees inferiorly—an asymmetric coverage limited by your nose, brow, and cheekbones. The binocular field, where the visual fields of both eyes overlap, covers about 120 degrees horizontally. This overlap is essential for stereoscopic depth perception, but it's a luxury—many prey animals sacrifice binocular overlap for 360-degree coverage, a different optimization for a different survival problem.

[VIEW IMAGES: Visual field maps showing binocular and monocular regions]

Neurologists can localize brain lesions with stunning precision just by mapping visual field deficits. A scotoma, a blind region in the visual field, tells a story about where in the visual pathway damage occurred. Damage to one optic nerve causes blindness in that eye only. Damage at the midline of the optic chiasm, where fibers from the nasal retina of each eye cross, produces bitemporal hemianopia (loss of both temporal fields), because the temporal fields are the ones viewed by the nasal retinas. Damage to the optic tract or visual cortex on one side produces contralateral homonymous hemianopia: loss of the same half of the visual field, the half opposite the lesion, in both eyes. The visual system's precise anatomical organization turns field deficits into diagnostic gold.
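
The localization logic can be written down as a small lookup that derives each deficit from which fiber bundles a lesion cuts. This is a simplified sketch covering only three left-sided or midline lesions; the table and function names are illustrative assumptions:

```python
# Nasal retina views the temporal half-field of its own eye, and vice versa.
FIELD_SEEN = {"nasal": "temporal", "temporal": "nasal"}
OPPOSITE = {"left": "right", "right": "left"}

# Fiber bundles (eye, hemiretina) interrupted by each lesion.
LESION_CUTS = {
    "left optic nerve": [("left", "nasal"), ("left", "temporal")],
    "optic chiasm (midline)": [("left", "nasal"), ("right", "nasal")],
    "left optic tract": [("left", "temporal"), ("right", "nasal")],
}

def lost_half_fields(lesion: str) -> dict:
    """Return, for each eye, the sides of space ('left'/'right') that go blind."""
    lost = {"left": set(), "right": set()}
    for eye, hemiretina in LESION_CUTS[lesion]:
        half_field = FIELD_SEEN[hemiretina]
        # An eye's temporal half-field lies on that eye's own side of space.
        side = eye if half_field == "temporal" else OPPOSITE[eye]
        lost[eye].add(side)
    return lost

if __name__ == "__main__":
    for lesion in LESION_CUTS:
        print(lesion, "->", lost_half_fields(lesion))
```

The chiasm entry yields the outer (temporal) half of each eye's field, and the left optic tract entry yields the right half of space in both eyes, matching the bitemporal and homonymous patterns described above.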

[VIEW IMAGES: Visual field defects and lesion localization diagrams]

[READ: The Visual Pathways: Anatomy and Function - clinical correlation]

Retinal Computation: The First Ten Layers

Photoreceptors: Rods and Cones

[VIEW IMAGES: Rod and cone photoreceptor structure showing outer segments and cellular organization]

The human retina contains approximately 120 million rods and 6 million cones, two photoreceptor types optimized for different light conditions. Rods mediate scotopic (dim light) vision, are exquisitely sensitive—capable of detecting single photons—but provide no color information and have low spatial resolution. Cones mediate photopic (bright light) vision, require higher light levels, come in three types tuned to different wavelengths (giving us trichromatic color vision), and provide high spatial acuity. The fovea contains only cones packed at maximum density. The periphery is dominated by rods—your night vision comes from the periphery, which is why astronomers use averted gaze to see dim stars.

[VIEW IMAGES: Microscopic images of rod and cone photoreceptors]

[READ: Rods and Cones: Structure and Function - photoreceptor physiology]

Each photoreceptor is a metabolic powerhouse wrapped around a signaling system of elegant simplicity. The outer segment contains hundreds of membranous discs, each densely studded with opsin molecules: rhodopsin in rods, photopsins in cones. When a photon strikes an opsin, it photoisomerizes the retinal chromophore from the 11-cis to the all-trans configuration in under a picosecond. This conformational change activates the opsin, which activates transducin, a G-protein, which activates phosphodiesterase, which hydrolyzes cGMP. Here's the twist: in darkness, cGMP holds open cyclic nucleotide-gated channels, allowing sodium and calcium to flow in and keeping the photoreceptor depolarized. Light closes these channels by destroying cGMP, causing hyperpolarization. Light doesn't excite photoreceptors; it silences them.

[VIEW IMAGES: Rhodopsin photoisomerization and retinal chromophore configuration]

[VIEW IMAGES: Phototransduction cascade showing how light leads to photoreceptor hyperpolarization]

This inverted logic—light causes hyperpolarization instead of depolarization—is actually a brilliant solution to the signal-to-noise problem. In darkness, photoreceptors continuously release glutamate at their ribbon synapses. Light reduces this release. The signaling range is determined by the dark release rate, which can be very high, giving a large dynamic range. The cascade also provides massive amplification: one photon isomerizes one rhodopsin, which activates hundreds of transducins, which activate phosphodiesterases that hydrolyze thousands of cGMP molecules, closing hundreds of channels. Single photons become detectable signals through this molecular amplification.
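
The amplification is a product of per-stage gains. The sketch below multiplies them out; the specific numbers are assumptions chosen only to be consistent with "hundreds" and "thousands" in the text, not measured values:

```python
import math

# Illustrative stage gains (assumed values, see lead-in).
STAGE_GAINS = [
    ("photon -> activated rhodopsin", 1),
    ("rhodopsin -> activated transducins", 200),
    ("transducin -> activated phosphodiesterase", 1),
    ("phosphodiesterase -> cGMP hydrolyzed", 10),
]

def cumulative_gain(stages) -> int:
    """Multiply the per-stage gains to get molecules affected per absorbed photon."""
    return math.prod(gain for _, gain in stages)

if __name__ == "__main__":
    running = 1
    for name, gain in STAGE_GAINS:
        running *= gain
        print(f"{name}: x{gain} (running total {running})")
```

Because the stages multiply, even modest gains at each step compound into a signal large enough to register a single photon.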

Retinal Circuits: Compression and Computation

The retina contains five major neuron types arranged in three cellular layers with two synaptic layers between them. Photoreceptor cell bodies occupy the outer nuclear layer. Photoreceptors synapse onto bipolar cells in the outer plexiform layer. Bipolar cells, whose cell bodies sit in the inner nuclear layer, in turn synapse onto ganglion cells in the inner plexiform layer. Horizontal cells and amacrine cells provide the lateral connections that create center-surround receptive fields, the retina's fundamental computational output.

[VIEW IMAGES: Retinal layer organization showing cellular architecture]

Bipolar cells come in two flavors: ON-bipolars that depolarize when light hits their receptive field center, and OFF-bipolars that hyperpolarize to the same stimulus. This split happens because the two types express different glutamate receptors. Remember, photoreceptors release less glutamate in light. ON-bipolars have metabotropic receptors (mGluR6) that inhibit them, so less glutamate disinhibits them—light turns them on. OFF-bipolars have ionotropic AMPA/kainate receptors that excite them, so less glutamate shuts them off—light turns them off. A single photoreceptor drives both pathways simultaneously, creating parallel representations of light increments and decrements.
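
The sign logic is easy to lose track of, so here is a toy model of it. Everything is reduced to signs and unitless values between 0 and 1; the function names are illustrative and the linear relations are assumptions, not a biophysical model:

```python
def glutamate_release(light: float) -> float:
    """Photoreceptor output: maximal in darkness (light=0), falling as light rises to 1."""
    return 1.0 - light

def on_bipolar(glutamate: float) -> float:
    """Sign-inverting synapse (mGluR6): glutamate inhibits, so less glutamate raises the response."""
    return -glutamate

def off_bipolar(glutamate: float) -> float:
    """Sign-preserving synapse (AMPA/kainate): glutamate excites, so less glutamate lowers the response."""
    return glutamate

if __name__ == "__main__":
    for light in (0.0, 1.0):
        g = glutamate_release(light)
        print(f"light={light}: glutamate={g}, ON={on_bipolar(g)}, OFF={off_bipolar(g)}")
```

Stepping the light from 0 to 1 moves the ON response up and the OFF response down, even though both cells listen to the same photoreceptor.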

[VIEW IMAGES: ON and OFF bipolar cell pathways and receptive field organization]

Horizontal cells, laterally interconnected neurons in the outer plexiform layer, receive input from photoreceptors and feed back onto them and onto bipolar cells. This creates center-surround antagonism: light in the receptive field center excites (or inhibits) the bipolar cell, while light in the surround has the opposite effect. This organization makes retinal neurons respond most strongly to edges and contrast rather than uniform illumination. A uniformly lit field produces little response because center and surround cancel. An edge, with light on one side and dark on the other, produces maximal response. The retina throws away information about absolute light levels and encodes spatial change—edges are where information lives.
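
A common way to model center-surround antagonism is a difference of Gaussians: a narrow excitatory center minus a broad inhibitory surround. The one-dimensional sketch below uses that model with assumed widths; it is an illustration of the principle, not a fit to real retinal data:

```python
import math

def gaussian(x: float, sigma: float) -> float:
    return math.exp(-x * x / (2 * sigma * sigma)) / (sigma * math.sqrt(2 * math.pi))

def dog_kernel(radius: int = 10, sigma_center: float = 1.0, sigma_surround: float = 3.0):
    """Center minus surround weights, shifted so they sum to zero."""
    k = [gaussian(x, sigma_center) - gaussian(x, sigma_surround)
         for x in range(-radius, radius + 1)]
    mean = sum(k) / len(k)
    return [w - mean for w in k]

def responses(signal, kernel):
    """Slide the receptive field across the signal (valid positions only)."""
    r = len(kernel) // 2
    return [sum(kernel[j] * signal[i - r + j] for j in range(len(kernel)))
            for i in range(r, len(signal) - r)]

KERNEL = dog_kernel()
uniform = [5.0] * 60                  # evenly lit field
edge = [0.0] * 30 + [1.0] * 30        # dark on the left, light on the right

if __name__ == "__main__":
    print("uniform peak:", max(abs(v) for v in responses(uniform, KERNEL)))
    print("edge peak:   ", max(abs(v) for v in responses(edge, KERNEL)))
```

The uniform field produces essentially no output regardless of how bright it is, while the step edge produces a clear response concentrated near the boundary.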

[VIEW IMAGES: Center-surround receptive fields and edge detection in retina]

Amacrine cells, the most diverse retinal neuron class with over 30 types, operate in the inner plexiform layer between bipolar and ganglion cells. They refine signals, detect motion, compute direction selectivity, and implement temporal filtering. Some amacrine cells use unconventional neurotransmitters—dopamine, acetylcholine, peptides—to modulate retinal processing based on circadian rhythms and adaptive state. The retina isn't a passive camera but an adaptive processor that changes its operating mode based on context.

Ganglion Cells: The Output Neurons

[VIEW IMAGES: M and P ganglion cell pathways showing different response properties]

Retinal ganglion cells are the sole output of the retina, sending action potentials down the optic nerve to the brain. The two major classes, M (magnocellular) and P (parvocellular) ganglion cells, create parallel information streams with complementary specializations. P-cells, constituting about 80% of ganglion cells, have small receptive fields, slow conduction velocities, sustained responses, and color-opponent properties—they're optimized for high-resolution form and color. M-cells, about 10% of ganglion cells, have large receptive fields, fast conduction velocities, transient responses, and high contrast sensitivity—they're optimized for motion and change detection.

This segregation reflects a fundamental constraint: you can't optimize for both high spatial resolution and high temporal resolution simultaneously. High resolution requires small receptive fields and sustained integration, which sacrifices speed. High temporal resolution requires large receptive fields and transient responses, which sacrifices spatial precision. The visual system solves this by building both analyzers in parallel, then keeping them separate all the way to cortex. M-cells connect to magnocellular layers of the lateral geniculate nucleus (LGN), which connect to cortical layer 4Cα, which feeds the dorsal stream. P-cells connect to parvocellular LGN layers, which connect to cortical layer 4Cβ, which feeds the ventral stream. The segregation that starts in the retina persists throughout the visual system.

The convergence ratio differs dramatically across the retina. In the fovea, a single cone can connect to a single midget bipolar cell, which connects to a single P-cell: a direct line to the brain that preserves maximum resolution. In the periphery, hundreds of rods converge onto a single bipolar cell, which converges with other bipolar cells onto a single M-cell. This pooling increases sensitivity, which is necessary for dim-light vision, but destroys spatial resolution. The same peripheral M-cell that can detect a single photon cannot tell you where in its large receptive field that photon arrived. The retina implements different computational strategies in parallel, optimizing for different ecological demands.
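
The trade-off can be shown with two toy wiring schemes. This is a deliberately minimal sketch: the function names, the threshold, and the input values are all assumptions for illustration:

```python
def private_line(photon_counts):
    """Fovea-like wiring: every receptor keeps its own output channel."""
    return list(photon_counts)

def pooled(photon_counts):
    """Periphery-like wiring: all receptors sum onto one output channel."""
    return [sum(photon_counts)]

def detects(outputs, threshold: float = 1.0) -> bool:
    """A channel signals only if its input reaches threshold."""
    return any(o >= threshold for o in outputs)

dim_patch = [0.25, 0.25, 0.25, 0.25]   # weak light spread over four receptors
flash_left = [1, 0, 0, 0]
flash_right = [0, 0, 0, 1]

if __name__ == "__main__":
    print("pooled sees dim patch: ", detects(pooled(dim_patch)))
    print("private sees dim patch:", detects(private_line(dim_patch)))
    print("pooled tells left from right:", pooled(flash_left) != pooled(flash_right))
```

Pooling lets the weak inputs add up to a detectable signal, but the pooled output is identical for a flash on the left and a flash on the right.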

From Eye to Brain: The Visual Pathway

Optic Nerve, Chiasm, and Tract

Ganglion cell axons exit the eye at the optic disc, the site of your blind spot, and form the optic nerve. The name is a misnomer: the optic nerve is actually a brain tract, myelinated by oligodendrocytes rather than Schwann cells, a cable of white matter extending from each eye toward the brain. At the optic chiasm, which sits just below the hypothalamus and above the pituitary, roughly half the fibers cross to the opposite side. Specifically, fibers from the nasal retina cross, while fibers from the temporal retina remain ipsilateral. Because the retinal image is inverted, the nasal retina sees the temporal visual field and vice versa. The crossing ensures that all information from the right visual field (from both eyes) ends up in the left hemisphere, and left visual field information reaches the right hemisphere.

[VIEW IMAGES: Optic chiasm showing partial decussation and visual field mapping]

This partial decussation is an evolutionary solution to binocular vision. Fish, with eyes on opposite sides of their heads, have complete crossing—each eye's entire output goes to the opposite hemisphere. Primates, with front-facing eyes and overlapping visual fields, need both eyes' information about the same region of space to reach the same hemisphere for stereoscopic processing. The architecture of the chiasm directly reflects the overlap of the visual fields—larger overlap means smaller fraction crossing.

Lateral Geniculate Nucleus: The Thalamic Gateway

[VIEW IMAGES: Lateral geniculate nucleus showing six-layered structure and M/P segregation]

The lateral geniculate nucleus, a layered structure in the thalamus, is the first central station for visual processing. It contains six distinct layers in primates, numbered 1-6 from ventral to dorsal. Layers 1 and 2, containing large cells, are the magnocellular layers receiving M-cell input. Layers 3-6, containing small cells, are the parvocellular layers receiving P-cell input. The M and P streams remain segregated, never mixing within the LGN. Moreover, each layer receives input from only one eye: layers 1, 4, and 6 from the contralateral eye; layers 2, 3, and 5 from the ipsilateral eye. This precise lamination keeps information from the two eyes separate until binocular integration happens in cortex.
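
The layer assignments are easy to mix up, so it can help to hold them in a small table. The sketch below encodes exactly the assignments stated above; the dictionary and helper names are illustrative:

```python
# layer number -> (cell class, eye of origin), numbered ventral (1) to dorsal (6)
LGN_LAYERS = {
    1: ("magnocellular", "contralateral"),
    2: ("magnocellular", "ipsilateral"),
    3: ("parvocellular", "ipsilateral"),
    4: ("parvocellular", "contralateral"),
    5: ("parvocellular", "ipsilateral"),
    6: ("parvocellular", "contralateral"),
}

def layers_for(pathway=None, eye=None):
    """List the layers matching a pathway and/or an eye of origin."""
    return [n for n, (p, e) in sorted(LGN_LAYERS.items())
            if (pathway is None or p == pathway) and (eye is None or e == eye)]

if __name__ == "__main__":
    print("contralateral eye:", layers_for(eye="contralateral"))
    print("magnocellular:    ", layers_for(pathway="magnocellular"))
```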

For decades, the LGN was thought to be a passive relay, simply transmitting retinal signals to cortex. This was wrong. LGN neurons have complex response properties, receive massive feedback from cortex (ten times more synapses from cortex than from retina), and are modulated by attention, arousal, and behavioral state. The LGN is a gate, not a relay—it controls which retinal signals reach consciousness based on top-down expectations and task demands. When you pay attention to something, cortex actively enhances LGN responses to that location, amplifying the signal before it even reaches conscious processing. Your brain is reaching back down to the thalamus to turn up the volume on inputs that matter.

[VIEW IMAGES: LGN feedback pathways and attentional modulation]

[READ: Functional Organization of the Visual Cortex - from LGN to cortex]

Primary Visual Cortex: Constructing Orientation

[VIEW IMAGES: Hubel and Wiesel's discoveries of orientation-selective neurons in V1]

David Hubel and Torsten Wiesel's recordings in cat and monkey visual cortex in the 1960s revolutionized our understanding of sensory processing. They discovered that V1 neurons don't respond to spots of light like retinal or LGN neurons—they respond to oriented edges. A V1 simple cell has an elongated receptive field with ON and OFF subregions arranged side by side. It responds maximally to a bar or edge at a specific orientation and position. Rotate the stimulus 90 degrees, and the neuron falls silent. This orientation selectivity is constructed from LGN inputs: simple cells receive convergent input from several LGN neurons whose center-surround receptive fields are aligned in a row. The geometry of the wiring creates the feature selectivity.
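
The wiring idea can be sketched numerically: sum several center-surround fields whose centers lie along a line, and the result prefers a bar along that line. The model below uses difference-of-Gaussians fields with assumed sizes and spacing, plus simple rectification; it is a toy illustration of the feedforward scheme, not a reconstruction of the original recordings:

```python
import math

def dog(dx: float, dy: float, sigma_c: float = 1.0, sigma_s: float = 2.5) -> float:
    """Center-surround (difference-of-Gaussians) weight at offset (dx, dy)."""
    r2 = dx * dx + dy * dy
    center = math.exp(-r2 / (2 * sigma_c ** 2)) / (2 * math.pi * sigma_c ** 2)
    surround = math.exp(-r2 / (2 * sigma_s ** 2)) / (2 * math.pi * sigma_s ** 2)
    return center - surround

# Five hypothetical LGN inputs with centers stacked in a vertical row.
LGN_CENTERS = [(0, y) for y in (-4, -2, 0, 2, 4)]
GRID = range(-15, 16)

def simple_cell_response(stimulus) -> float:
    """Sum the LGN drive over the image, then rectify (no negative firing rates)."""
    total = 0.0
    for x in GRID:
        for y in GRID:
            luminance = stimulus(x, y)
            if luminance:
                total += luminance * sum(dog(x - cx, y - cy) for cx, cy in LGN_CENTERS)
    return max(0.0, total)

def vertical_bar(x, y):
    return 1.0 if abs(x) <= 1 else 0.0

def horizontal_bar(x, y):
    return 1.0 if abs(y) <= 1 else 0.0

if __name__ == "__main__":
    print("vertical bar:  ", simple_cell_response(vertical_bar))
    print("horizontal bar:", simple_cell_response(horizontal_bar))
```

The vertical bar covers all five centers at once, while the horizontal bar covers one center and falls on the surrounds of the others, so the model cell responds far more strongly to the vertical bar.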

Complex cells, also orientation-selective, show position invariance within their receptive fields—they respond to their preferred orientation anywhere within a larger region. They likely receive input from multiple simple cells with the same orientation preference but different positions. This creates a hierarchy: circular center-surround → oriented simple cell → position-invariant complex cell. Each stage extracts progressively more abstract features while discarding irrelevant details.

V1 is organized into orientation columns—vertical slabs of cortex where all neurons share the same orientation preference. As you move tangentially across the cortical surface, preferred orientation rotates smoothly, cycling through 180 degrees every millimeter or so. V1 also contains ocular dominance columns, alternating stripes where neurons prefer input from one eye or the other. The intersection of orientation and ocular dominance creates a two-dimensional map where every combination of orientation and eye preference has a dedicated cortical representation. This columnar organization, revealed by optical imaging and anatomical tracers, shows that cortex is not randomly wired but highly structured to represent stimulus feature space efficiently.

[VIEW IMAGES: Orientation and ocular dominance columns in V1 revealed by optical imaging]

V1 exhibits extreme cortical magnification—disproportionate allocation of cortical area to central vision. The fovea, representing 1% of the visual field, claims 50% of V1. This overrepresentation is why you can read text: the tiny foveal image requires massive parallel processing to extract the fine spatial detail that letters demand. Cortical magnification means that a 1-degree stimulus in the fovea activates 100 times more cortex than the same stimulus in the far periphery. Your visual experience of a uniform, detailed world is an illusion—you have an island of high resolution surrounded by a sea of vague, low-resolution vision that you never notice because you move your fovea to anything interesting.
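
Cortical magnification is often summarized by an inverse-linear function of eccentricity. The sketch below uses one commonly cited approximation for human V1 (Horton and Hoyt, 1991), M = 17.3 / (E + 0.75) millimeters of cortex per degree; that formula and its constants are an assumption brought in for illustration and do not come from this lecture:

```python
def linear_magnification_mm_per_deg(eccentricity_deg: float,
                                    a: float = 17.3, e2: float = 0.75) -> float:
    """Millimeters of V1 per degree of visual angle at a given eccentricity."""
    return a / (eccentricity_deg + e2)

def areal_ratio(ecc_near_deg: float, ecc_far_deg: float) -> float:
    """How many times more cortical area a small patch gets at the nearer eccentricity."""
    near = linear_magnification_mm_per_deg(ecc_near_deg)
    far = linear_magnification_mm_per_deg(ecc_far_deg)
    return (near / far) ** 2

if __name__ == "__main__":
    for far in (5.0, 12.0, 40.0):
        print(f"0.5 deg vs {far} deg: about {areal_ratio(0.5, far):.0f}x the cortical area")
```

Under this approximation the area ratio depends strongly on which two eccentricities are compared, so any single "times more cortex" figure should be read as an order-of-magnitude statement.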

[VIEW IMAGES: Cortical magnification showing disproportionate foveal representation in V1]

Higher Visual Areas: What and Where

The Ventral Stream: Object Recognition

[VIEW IMAGES: Ventral "what" and dorsal "where" streams in visual processing]

Beyond V1, visual processing splits into two great streams: the ventral stream projecting into temporal cortex, and the dorsal stream projecting into parietal cortex. The ventral stream, often called the "what" pathway, specializes in object recognition, color, and fine form. It starts with V1 and V2, continues through V4 (critical for color processing), and culminates in inferotemporal cortex (IT), where neurons respond to complex objects—faces, hands, bodies—with remarkable selectivity and invariance. A face-selective IT neuron might respond to a face regardless of size, position, lighting, or viewing angle. This is invariant object recognition, the ability to identify the same object across transformations, implemented through hierarchical feature extraction.

[VIEW IMAGES: Ventral stream anatomy and object recognition processing]

The organization of IT cortex is not random. The fusiform face area (FFA) responds preferentially to faces. The parahippocampal place area (PPA) responds to scenes and spaces. The extrastriate body area (EBA) responds to body parts. This category-selective organization suggests that evolution has tuned cortical circuits to recognize ecologically important object categories. Face recognition is so important for social primates that we dedicate specialized hardware to it. Damage to FFA causes prosopagnosia—face blindness—where patients can see faces perfectly but cannot recognize them, sometimes failing to recognize even their own reflection.

[VIEW IMAGES: Fusiform face area and face-selective neural responses]

[SEARCH: "patient D.F. visual form agnosia" - see dissociation between perception and action]

The Dorsal Stream: Space and Action

The dorsal stream, projecting from V1 through V2, V3, and MT (middle temporal area) into parietal cortex, specializes in spatial vision, motion processing, and visually guided action. Area MT, also called V5, is the motion center of the visual system. MT neurons are direction-selective, responding to motion in a preferred direction and speed. They integrate local motion signals from V1 to compute global motion patterns—solving the aperture problem where local motion is ambiguous but global motion is clear. Damage to MT produces akinetopsia, motion blindness, a rare condition where patients see the world as a series of static snapshots. One patient described pouring tea as impossible—the stream appeared frozen, then the cup was suddenly overflowing.

[VIEW IMAGES: MT/V5 area showing motion-selective neural responses]

[SEARCH: "akinetopsia motion blindness" - see cases of selective motion perception deficits]

Parietal cortex, the dorsal stream's terminus, contains multiple specialized areas. The lateral intraparietal area (LIP) encodes spatial attention and saccade planning—its neurons respond to locations where attention is directed or where eye movements are planned, creating a priority map of visual space. The anterior intraparietal area (AIP) processes object affordances—how graspable things are—and guides hand shaping during reaching. The dorsal stream is intimately connected to motor systems, implementing sensorimotor transformations that convert visual information into action coordinates.

[VIEW IMAGES: Dorsal stream and parietal areas for visuomotor control]

The what/where distinction isn't absolute—the streams interact extensively through interconnections. But the dissociation is real and has profound implications. Conscious visual perception, the vivid sense of seeing, is predominantly a ventral stream phenomenon. The dorsal stream operates largely unconsciously, guiding action without awareness. This is why you can catch a ball without consciously computing its trajectory, why skilled athletes respond "without thinking," and why your hand knows how to reach for your coffee cup even when your mind is elsewhere. The two systems see different things in different ways for different purposes.

Color Vision: Three Receptors, Infinite Hues

Trichromatic Theory and Opponent Processing

[VIEW IMAGES: Spectral sensitivity curves of the three cone types]

Human color vision is based on three cone types with overlapping spectral sensitivities. S-cones peak around 420 nm (blue), M-cones around 530 nm (green), and L-cones around 560 nm (red). These aren't pure color detectors—each responds to a broad range of wavelengths. The visual system determines color by comparing the responses of the three cone types. A 580 nm light stimulates M-cones and L-cones but not S-cones; the ratio of M to L response encodes "yellow." This is trichromacy, the principle that three receptor types suffice to create the entire perceptual color space because any wavelength produces a unique pattern of responses across the three cone types.

[READ: Neural Mechanisms of Color Vision - from cones to perception]

But raw trichromatic signals aren't what reaches consciousness. Retinal circuits transform cone outputs into opponent signals: L-M (red-green), S-(L+M) (blue-yellow), and L+M (luminance). Color-opponent neurons in the retina and LGN have center-surround receptive fields where center and surround prefer different wavelengths, for example an L-ON center with an M-OFF surround. Downstream, double-opponent neurons in cortex (for example, L-ON/M-OFF in the center and M-ON/L-OFF in the surround) signal color contrast rather than absolute color. They respond best at red-green borders and weakly to uniform color fields. Like spatial vision, color vision is about edges and differences, not absolute values.
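
The step from cone responses to opponent signals can be sketched in a few lines. The cone peaks are the ones quoted in this section; the Gaussian shape and the 40 nm bandwidth are assumptions made for the example, since real cone sensitivity curves are broader and asymmetric:

```python
import math

CONE_PEAKS_NM = {"S": 420.0, "M": 530.0, "L": 560.0}
BANDWIDTH_NM = 40.0  # assumed width of each sensitivity curve

def cone_responses(wavelength_nm: float) -> dict:
    """Relative response of each cone type to a single-wavelength light."""
    return {name: math.exp(-((wavelength_nm - peak) ** 2) / (2 * BANDWIDTH_NM ** 2))
            for name, peak in CONE_PEAKS_NM.items()}

def opponent_channels(cones: dict) -> dict:
    """Recombine cone signals into the three opponent axes named in the text."""
    s, m, l = cones["S"], cones["M"], cones["L"]
    return {
        "red_green": l - m,
        "blue_yellow": s - (l + m),
        "luminance": l + m,
    }

if __name__ == "__main__":
    for wavelength in (450.0, 580.0):
        channels = opponent_channels(cone_responses(wavelength))
        print(wavelength, {k: round(v, 3) for k, v in channels.items()})
```

In this toy model a 580 nm light drives L and M but barely touches S, so the blue-yellow channel swings toward yellow, while a 450 nm light pushes the same channel the other way.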

[VIEW IMAGES: Opponent process theory and color-opponent neurons]

Color constancy, the fact that a red apple looks red under both sunlight and tungsten illumination despite different wavelengths reaching the eye, emerges from these opponent mechanisms. The brain doesn't care about absolute cone responses but about ratios and contrasts across space. Surfaces surrounded by the same illumination maintain constant ratios even when absolute values change. This is why you can only see color distortions when you compare different illuminations side by side—your brain normalizes everything within a scene based on contextual cues.

[VIEW IMAGES: Color constancy demonstrations and illusions]

Color Blindness and Tetrachromacy

The genes for L and M opsins lie on the X chromosome, while the S opsin gene is autosomal. This means color blindness affecting red-green discrimination is X-linked and more common in males (8%) than females (0.5%). Protanopia (missing L-cones) and deuteranopia (missing M-cones) produce similar red-green confusion because L and M are so spectrally close. True tetrachromats—people with four functioning cone types—may exist in the female population due to X-chromosome heterozygosity, potentially seeing color dimensions invisible to normal trichromats. We can barely imagine their perceptual world, just as dichromats cannot imagine ours.
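
The sex difference follows from X-linkage: a male needs one affected X, a female needs two. The sketch below treats red-green deficiency as a single X-linked recessive trait under Hardy-Weinberg assumptions, which is a simplification (several distinct gene variants are involved), so it reproduces the order of magnitude rather than the exact figures quoted above:

```python
def female_affected_rate(male_rate: float) -> float:
    """Females need two affected X chromosomes: q squared."""
    return male_rate ** 2

def female_carrier_rate(male_rate: float) -> float:
    """Females with exactly one affected X chromosome: 2pq."""
    return 2 * male_rate * (1 - male_rate)

MALE_RATE = 0.08  # the male prevalence quoted in the text

if __name__ == "__main__":
    print(f"affected females: {female_affected_rate(MALE_RATE):.2%}")
    print(f"carrier females:  {female_carrier_rate(MALE_RATE):.2%}")
```

The carrier group matters for the tetrachromacy question, because women who are heterozygous for L or M opsin variants are the candidates for carrying a fourth cone type.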

[VIEW IMAGES: Color blindness types and Ishihara test plates]

[VIEW IMAGES: Tetrachromacy and extended color vision]

Predictive Vision: Filling In and Leaving Out

The Blind Spot and Perceptual Completion

[VIEW IMAGES: Blind spot demonstrations and perceptual filling-in phenomena]

Your blind spot is not experienced as a dark hole or a blur—it's filled in with whatever matches the surroundings. If you stare at a uniform background, the blind spot region appears uniform. If you place the blind spot over a pattern, your brain continues the pattern across the gap. This is not the retina filling in—there's no retina there—but cortical completion, where V1 and higher areas hallucinate visual content based on surrounding context. The brain assumes the world is continuous and smooth unless evidence suggests otherwise.

This completion is so automatic and convincing that you genuinely see the filled-in content. When researchers use clever stimuli to put entirely novel content in the blind spot, subjects report seeing it, and their V1 shows activity corresponding to the hallucinated features. The brain doesn't tolerate gaps in perception—it would rather make something up than admit ignorance. This strategy usually works because the world is locally smooth, and predicting the blind spot content from surroundings is usually correct. But it means your conscious visual experience includes hallucinated content you can't distinguish from sensory input.
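The logic of filling in from context can be sketched as interpolation across a gap. This is a toy analogy only, not a model of the cortical mechanism: the function name `fill_gap` and the one-dimensional luminance rows are hypothetical, and missing samples are marked with `None`.

```python
# Toy analogy for perceptual completion: fill a gap in a 1D row of luminance
# samples by linear interpolation between the nearest known neighbors.
# Assumption: the "world" is locally smooth, as the text describes.

def fill_gap(samples):
    """Return a copy of samples with None entries replaced by interpolation."""
    filled = list(samples)
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is not None:
            i += 1
            continue
        start = i
        while i < n and filled[i] is None:
            i += 1
        left = filled[start - 1] if start > 0 else None
        right = filled[i] if i < n else None
        if left is None and right is None:
            raise ValueError("no known samples to interpolate from")
        # At the edges, extend the single known neighbor.
        if left is None:
            left = right
        if right is None:
            right = left
        gap = i - start
        for k in range(gap):
            t = (k + 1) / (gap + 1)
            filled[start + k] = left + t * (right - left)
    return filled

uniform = fill_gap([5, 5, None, None, 5, 5])    # uniform surround
gradient = fill_gap([0, 1, None, None, 4, 5])   # smooth gradient
print(uniform)
print(gradient)
```

A uniform surround yields a uniform fill and a gradient is continued across the gap. Anything that exists only inside the gap is lost, because the fill is computed entirely from the surround.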

Change Blindness and Inattentional Blindness

Change blindness reveals another uncomfortable truth: you notice far less than you think. If a change to a visual scene occurs during an eye movement, a blink, or a brief visual disruption, even enormous changes can go unnoticed. A person you're talking to can be swapped for someone completely different behind an obstruction, and many people continue the conversation without noticing. This isn't stupidity; it's the fundamental architecture of attention. The brain doesn't maintain a detailed representation of the entire visual scene. It constructs vivid representations of attended locations on demand while leaving unattended regions poorly represented. You feel like you see everything because you can look anywhere, but you're not storing what you saw when you looked away.

Inattentional blindness takes this further. The famous invisible gorilla experiment asked subjects to count basketball passes. While they counted, a person in a gorilla suit walked through the scene, clearly visible. Roughly half of the subjects never saw it. They weren't looking for gorillas; gorillas were therefore invisible. Attention is a spotlight that illuminates a small region of visual space while leaving the rest in darkness. What you don't attend to might as well not exist: it produces neural responses in early visual areas, but those signals don't reach awareness without attention amplifying them.

This has staggering implications. Eyewitness testimony is unreliable not because memory is bad (though it is) but because witnesses never saw half of what they claim to remember. Radiologists miss tumors on chest X-rays because their attention is directed elsewhere. Drivers in accidents claim the pedestrian appeared from nowhere because inattention rendered the pedestrian invisible, even though the pedestrian was in plain sight. Vision is not a video camera recording everything. It's a limited-capacity inference engine that constructs a sparse representation of behaviorally relevant features while ignoring the rest.

Vision as Controlled Hallucination

Modern theories frame perception as controlled hallucination—the brain generates predictions about what it expects to see, compares these predictions to sensory input, and updates its predictions based on prediction errors. This predictive coding framework explains a range of phenomena: why familiar objects are easier to see, why context influences perception, why ambiguous figures flip between interpretations, and why hallucinations are indistinguishable from perception when prediction errors are suppressed.

Every moment, your brain is predicting the visual input it expects to receive and comparing those predictions against actual retinal input. Only the differences, the prediction errors, propagate up the hierarchy to update the model. Most of what you see is predicted, not sensed. This is efficient: transmitting prediction errors requires far less bandwidth than transmitting raw sensory data. But it means your conscious perception is a mixture of bottom-up sensory evidence and top-down predictions, blended so seamlessly that you cannot distinguish which is which.
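The predict, compare, update loop can be sketched in a few lines. This is a minimal illustration under strong assumptions: a single scalar stands in for the visual input, and the names `predictive_update`, `run`, and `learning_rate` are hypothetical, not taken from any particular predictive coding model.

```python
# Minimal sketch of a prediction-error update loop.
# Assumption: the "scene" is one number and the model moves a fixed fraction
# of the way toward the input on each step.

def predictive_update(prediction, sensory_input, learning_rate=0.5):
    """Return the updated prediction and the prediction error that drove it."""
    error = sensory_input - prediction
    return prediction + learning_rate * error, error

def run(inputs, initial_prediction=0.0, learning_rate=0.5):
    """Feed a sequence of inputs through the loop and collect the errors."""
    prediction = initial_prediction
    errors = []
    for x in inputs:
        prediction, error = predictive_update(prediction, x, learning_rate)
        errors.append(error)
    return prediction, errors

# A stable scene (input 10) followed by a sudden change (input 20).
final_prediction, errors = run([10, 10, 10, 10, 10, 10, 20])
print([round(e, 3) for e in errors])
```

While the input stays constant the error halves on every step, so less and less needs to be signaled. The sudden change at the end produces a large error again, which is the only moment the model has something new to report.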

Vision, then, is not about accurately representing the world but about constructing a useful model that supports action and survival. The model is constrained by sensory evidence but not determined by it. Your brain is hallucinating your visual world into existence every moment, using retinal input merely to guide and constrain the hallucination. The question isn't whether perception is accurate—it's whether it's adaptive. Fitness beats fidelity.

Clinical Perspectives and Disorders

Retinal Pathologies

Retinitis pigmentosa, a group of genetic disorders affecting photoreceptors, typically begins with rod degeneration. As rods die, patients first lose night vision and peripheral vision, leaving them with tunnel vision. Eventually, cones degenerate too, leading to blindness. Age-related macular degeneration (AMD), a leading cause of irreversible vision loss among older adults in developed countries, damages the macula, the central retina that contains the fovea, while leaving peripheral vision intact. Patients can navigate rooms but cannot read or recognize faces, the opposite loss pattern from retinitis pigmentosa. Diabetic retinopathy damages retinal blood vessels, causing hemorrhages and edema that destroy local patches of retina, producing irregular scotomas. Each pathology reveals the retina's organization through the pattern of vision loss.

[VIEW IMAGES: Retinal pathologies and their characteristic vision loss patterns]

Cortical Blindness and Visual Agnosias

Bilateral V1 damage causes cortical blindness—patients claim to see nothing and behave as if blind, yet may show blindsight, responding to visual stimuli they deny seeing. Residual pathways through superior colliculus and pulvinar bypass the damaged V1, allowing unconscious visual processing to guide behavior without awareness. Ventral stream lesions cause visual agnosias—patients can see but cannot recognize. Object agnosia (difficulty recognizing objects), prosopagnosia (difficulty recognizing faces), and color agnosia (difficulty recognizing colors despite intact color discrimination) fractionate the "what" pathway into dissociable components. Dorsal stream lesions cause optic ataxia (difficulty reaching under visual guidance) and spatial neglect (failure to attend to one side of space). These dissociations validate the anatomical segregation revealed by physiology.

[VIEW IMAGES: Cortical blindness and blindsight phenomenon]

[VIEW IMAGES: Visual agnosias and dorsal stream deficits]

Closing: The Invention of Light

You are not seeing the electromagnetic radiation between 400 and 700 nanometers that reaches your retina. You are seeing a model—a useful fiction constructed by billions of neurons applying 600 million years of evolutionary priors about what visual input usually means. Your brain's assumptions about the world—that surfaces are smooth, that lighting is consistent, that objects persist, that edges matter more than uniform regions—are so deeply embedded in visual processing that you cannot see without them. When these assumptions are violated, you get illusions. When they're correct, you get perception. The difference isn't in your consciousness—it's in the world.

The marvel is not that vision is sometimes wrong. The marvel is that a 3-pound biological computer, consuming 20 watts, can construct a dynamic three-dimensional model of the world from two 2D projections, update it 60 times per second, maintain stable perception despite constant eye movements, fill in missing information, adjust for changing lighting, segment objects from backgrounds, recognize faces in milliseconds, guide actions with millimeter precision, and do all this so effortlessly that you never notice it's happening. Vision is not a window on reality. It's reality's most beautiful lie, and you believe it utterly.

Thought Questions for Discussion

The Resolution Illusion: You have the subjective experience of seeing the entire visual world in sharp, colorful detail. But the physiology shows that only your fovea (about 2 degrees) has high acuity and full color vision, while the rest of your visual field is progressively lower in resolution and largely color-blind. How does the brain create the illusion of uniform high-resolution vision when the underlying representation is so non-uniform? What evolutionary advantage might this illusion provide, and what vulnerabilities does it create? Consider how this relates to attention, eye movements, and working memory.

Parallel Streams and Consciousness: The ventral and dorsal streams process different aspects of vision (what vs. where/how), and only ventral stream processing reliably reaches consciousness. Patient D.F. could guide actions with vision she couldn't consciously perceive. What does this dissociation tell us about the relationship between vision and consciousness? If the dorsal stream implements sophisticated visual processing without awareness, what determines which neural processes enter consciousness? How might this inform debates about unconscious perception, subliminal processing, and the hard problem of consciousness?

Controlled Hallucination: Modern theories propose that perception is controlled hallucination—the brain generates predictions and uses sensory input merely to constrain and update them. This explains why your blind spot is filled in, why change blindness occurs, and why context influences perception. But if perception is predominantly prediction rather than sensation, what distinguishes perception from hallucination? Are psychiatric hallucinations fundamentally different from normal perception, or just prediction errors that fail to be corrected? What are the implications for concepts like "objective reality" if all perception is constructed rather than received?

Practice Questions:

• The fovea contains only _______ photoreceptors and represents approximately _______% of the visual field but claims _______% of V1.

• Photoreceptors _______ (depolarize/hyperpolarize) in response to light because light destroys _______, which normally holds _______ channels open.

• The retina contains _______ million rods and _______ million cones, with rods mediating _______ vision and cones mediating _______ vision.

• ON-bipolar cells use _______ glutamate receptors that _______ them, while OFF-bipolar cells use _______ receptors that _______ them.

• Retinal ganglion cells create _______ and _______ pathways, with the first optimized for _______ and the second for _______.

• At the optic chiasm, fibers from the _______ retina cross to the opposite hemisphere, ensuring that the _______ visual field is represented in the _______ hemisphere.

• The lateral geniculate nucleus contains _______ layers, with layers 1-2 being _______ and layers 3-6 being _______.

• V1 simple cells respond to _______ at specific _______, while complex cells show _______ invariance within their receptive fields.

• The ventral stream, projecting into _______ cortex, specializes in _______ and _______, while the dorsal stream projects into _______ cortex for _______ and _______.

• Color vision is based on _______ cone types with peaks at _______ nm (S), _______ nm (M), and _______ nm (L).

📝 View Answer Key for Chapter 7