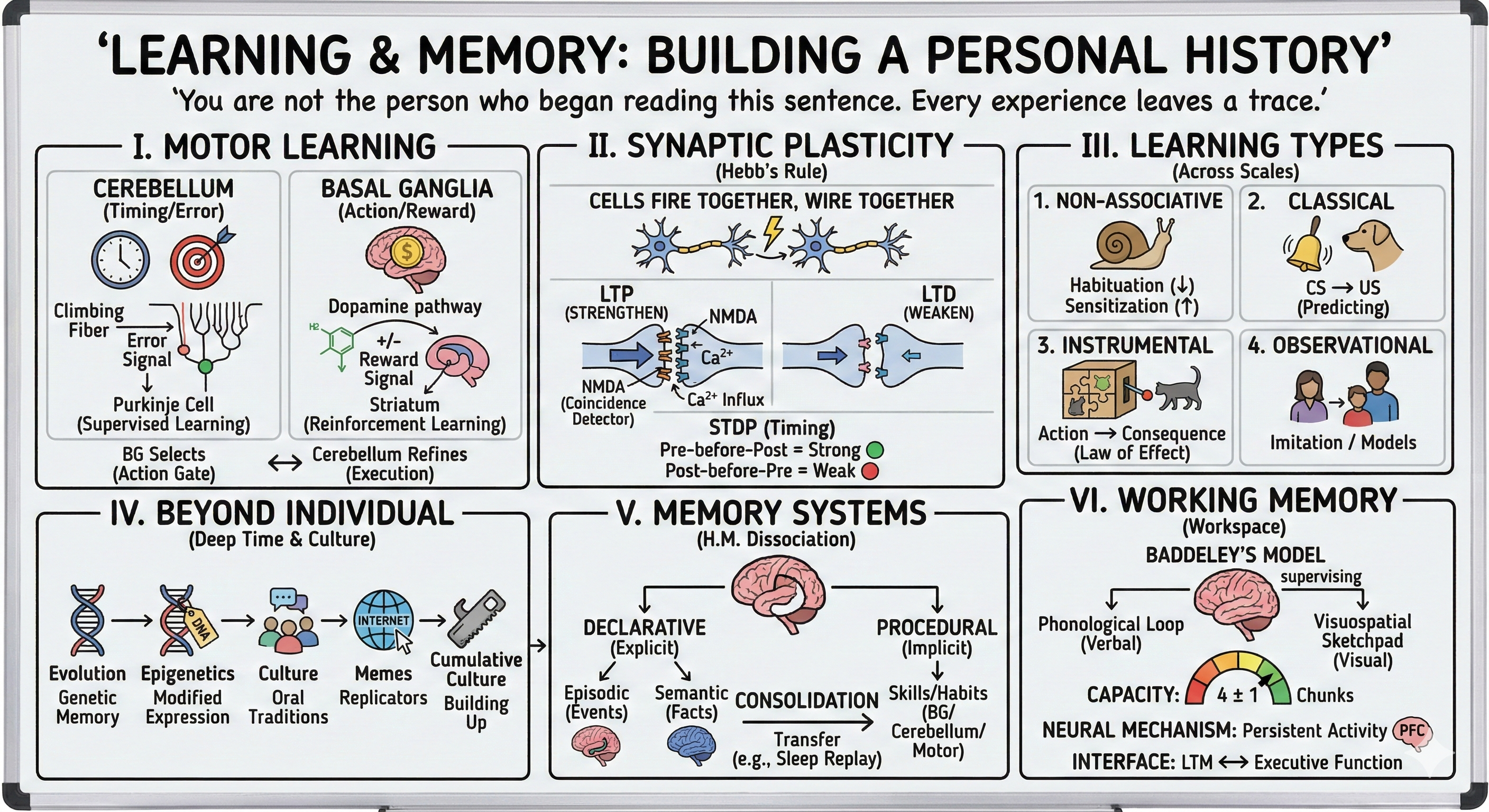

Learning and Memory: Building a Personal History

• Cerebellum: error correction and forward models

• Basal ganglia: action selection and reinforcement learning

• Complementary roles in skill acquisition

II. Synaptic Plasticity: The Molecular Basis (12 min)

• Hebbian learning: cells that fire together wire together

• Long-term potentiation (LTP) and depression (LTD)

• NMDA receptors as coincidence detectors

• Spike-timing-dependent plasticity (STDP)

III. Learning Across Scales and Types (12 min)

• Non-associative: habituation and sensitization

• Classical conditioning: Pavlov to prediction error

• Instrumental learning: actions and consequences

• Observational and cognitive learning

IV. Learning Beyond the Individual: Deep Time and Culture (12 min)

• Evolution as genetic memory: archetypes and instincts

• Lamarck's revenge: epigenetic inheritance

• Cultural memory: language, memes, and gossip

• Cumulative cultural evolution as collective learning

V. Memory Systems: Multiple Forms of Storage (18 min)

• Declarative vs procedural: H.M. and double dissociation

• Episodic memory: the hippocampal time machine

• Semantic memory: conceptual knowledge networks

• Consolidation: from hippocampus to cortex

VI. Working Memory: The Workspace of Thought (14 min)

• Baddeley's model and capacity limits (4±1 chunks)

• Prefrontal cortex: persistent activity and maintenance

• Interface to executive function (preview next lecture)

You are not the person who began reading this sentence. In the few seconds between the first word and the last, your synapses changed: some strengthened, some weakened, patterns shifted. Every experience leaves a trace; every thought rewires the architecture. Learning is the brain's defining feature, the ability to be modified by its own activity. Memory is learning's shadow, the persistence of those modifications across time. Without learning and memory, you would wake each morning as a newborn, unable to recognize faces, speak language, or understand the symbols on this page. Today we explore how neural circuits transform experience into lasting change, from molecular modifications at single synapses to large-scale reorganization of memory systems that create your continuous sense of self across decades. We'll trace learning from its simplest forms (reflexes growing stronger or weaker) through skill acquisition, habit formation, and finally to the extraordinary systems that let you mentally travel through time, re-experiencing past events or imagining futures that haven't happened yet.

Bridge from Motor Control: The Learning Systems

Cerebellum: The Precision Timing Machine

[VIEW IMAGES: Cerebellar anatomy showing distinctive layered architecture]

The cerebellum (Latin: "little brain") contains more neurons than the rest of the brain combined: roughly 69 billion of the brain's ~86 billion neurons, about 80% of the total, packed into roughly 10% of brain volume. Its structure is remarkably regular and repetitive, suggesting a stereotyped computation performed millions of times in parallel. The cerebellum receives input from virtually every sensory system and motor area, yet lesions don't paralyze you; they make your movements uncoordinated, mistimed, and inaccurate. Patients with cerebellar damage show ataxia (uncoordinated movement), dysmetria (overshooting or undershooting targets), and intention tremor (trembling during purposeful movements). These symptoms reveal the cerebellum's computational role: it doesn't initiate movement but refines it, correcting errors and learning to anticipate disturbances.

The cerebellum implements supervised learning—it compares intended movements with actual outcomes, using error signals to adjust future commands. David Marr and James Albus independently proposed models in which climbing fibers (from the inferior olive) carry error signals that modify the synapses between parallel fibers and Purkinje cells—the sole output neurons of the cerebellar cortex. When a movement error occurs, climbing fibers fire, causing long-term depression (LTD) at recently active parallel fiber synapses. Over repeated trials, the pattern of parallel fiber activity associated with errors gets systematically weakened, reducing future errors. This is how you learn to catch a ball, type without looking, or play a musical instrument—cerebellar circuits refine motor commands until predictions match outcomes.
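The Marr-Albus idea can be sketched as a toy model in which one Purkinje cell sums weighted parallel-fiber inputs and a binary climbing-fiber error signal depresses whichever synapses were active when the error occurred. This is a minimal illustration under stated assumptions, not Marr's or Albus's original formulation: the helper name `train_purkinje`, the multiplicative LTD rule, the activity patterns, and the learning rate are all hypothetical choices.

```python
import numpy as np

def train_purkinje(patterns, climbing_fiber, n_trials=20, ltd_rate=0.1):
    """Toy Marr-Albus learning (hypothetical helper, illustrative only).

    patterns:       (n_contexts, n_fibers) array of 0/1 parallel-fiber activity
    climbing_fiber: (n_contexts,) array, 1 if that context produced a movement error
    Returns the parallel-fiber -> Purkinje cell weights after training.
    """
    weights = np.ones(patterns.shape[1])
    for _ in range(n_trials):
        for x, error in zip(patterns, climbing_fiber):
            if error:
                # Climbing fiber fired: depress (LTD) only the synapses that were
                # active in this context; inactive synapses are left untouched.
                weights -= ltd_rate * x * weights
    return weights

patterns = np.array([[1, 1, 0, 0],   # context that keeps producing errors
                     [0, 0, 1, 1]])  # context that does not
weights = train_purkinje(patterns, climbing_fiber=np.array([1, 0]))
purkinje_output = patterns @ weights  # summed drive for each context
print(purkinje_output)
```

Because Purkinje cells inhibit the deep cerebellar nuclei, a weaker Purkinje response in the error-prone context would release that downstream output from inhibition; the sketch stops at the Purkinje cell.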

[VIEW IMAGES: Cerebellar learning in eyeblink conditioning paradigm]

The cerebellum's role extends beyond motor control. It has been implicated in cognitive timing, working memory, attention, and even emotion regulation. Recent theories suggest the cerebellum builds forward models not just of physical movements but of cognitive operations, predicting the sensory or cognitive consequences of mental actions. When you mentally rehearse a speech or imagine a chess move, your cerebellum may be simulating the expected outcomes. If correct, this unifying view of the cerebellum as a general-purpose learning machine for prediction would help explain its widespread connections and its still poorly understood involvement in autism, dyslexia, and schizophrenia.

Basal Ganglia: The Action Selection Committee

[VIEW IMAGES: Basal ganglia circuit showing direct and indirect pathways]

The basal ganglia, a set of subcortical nuclei including the striatum, globus pallidus, substantia nigra, and subthalamic nucleus, solve a different problem: action selection. At any moment, countless potential actions compete for execution. The basal ganglia implement a gating mechanism that selects which action to perform and suppresses alternatives. Damage to different basal ganglia components produces strikingly different motor disorders. Parkinson's disease, caused by death of dopamine neurons in the substantia nigra, produces akinesia (difficulty initiating movements), bradykinesia (slowness), and rigidity. Huntington's disease, caused by degeneration of striatal neurons, produces chorea (involuntary, dance-like movements). Because basal ganglia output is inhibitory, acting as a brake on the thalamus, the two diseases are mirror images: too much inhibitory output and movements get stuck (Parkinson's); too little and movements escape control (Huntington's).

The basal ganglia learn through reinforcement learning: trial-and-error discovery of which actions lead to rewards. Dopamine neurons signal reward prediction errors, the difference between received and expected reward. When an unexpected reward arrives, dopamine neurons burst, strengthening the synapses that led to the action (a positive prediction error). When an expected reward fails to appear, dopamine neurons pause, weakening those synapses (a negative prediction error). This closely matches temporal-difference (TD) learning, a reinforcement learning algorithm developed in artificial intelligence; the basal ganglia appear to implement something like it in biological hardware, learning action values through experience.
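A minimal tabular TD(0) sketch shows how a prediction-error signal migrates from the reward to the cue that predicts it. Everything here is a hypothetical simplification: a trial is a fixed sequence of time steps starting at cue onset, reward arrives at the last step, the pre-cue period has a fixed value of zero because the cue arrives unpredictably, and the learning rate and discount factor are arbitrary. The helper name `td_trial` is illustrative.

```python
import numpy as np

def td_trial(V, reward_step, alpha=0.2, gamma=1.0, reward=1.0):
    """Run one trial of tabular TD(0), updating V in place.

    V[t] is the predicted future reward t steps after cue onset.
    Returns prediction errors: element 0 is the error at cue onset
    (pre-cue value fixed at 0), element t + 1 is the error at step t.
    """
    n = len(V)
    deltas = np.zeros(n + 1)
    deltas[0] = gamma * V[0] - 0.0  # jump from the unpredictable pre-cue state to the cue
    for t in range(n):
        r = reward if t == reward_step else 0.0
        v_next = V[t + 1] if t + 1 < n else 0.0  # trial ends after the last step
        delta = r + gamma * v_next - V[t]        # reward prediction error
        V[t] += alpha * delta
        deltas[t + 1] = delta
    return deltas

V = np.zeros(5)
naive = td_trial(V, reward_step=4)       # untrained: error appears at the reward
for _ in range(200):
    td_trial(V, reward_step=4)
trained = td_trial(V, reward_step=4)     # trained: error has moved to the cue
omitted = td_trial(V.copy(), reward_step=4, reward=0.0)  # expected reward withheld
```

The three runs mirror the dopamine pattern described above only qualitatively: a burst at an unexpected reward, a burst that shifts to the cue after training, and a dip at the usual reward time when the reward is omitted.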

[VIEW IMAGES: Dopamine neuron responses showing prediction error signals]

The complementary roles of cerebellum and basal ganglia illuminate different learning strategies. The cerebellum uses supervised learning with explicit error signals—you know when you've missed the target. The basal ganglia use reinforcement learning with reward signals—you know when outcomes are better or worse than expected, even without knowing the "correct" action. Together they enable skill acquisition: basal ganglia select which actions to attempt (exploration and exploitation), cerebellum refines execution (precision and timing). As skills become automatic, control shifts from cortex (slow, effortful) to basal ganglia and cerebellum (fast, automatic). This is why you can drive while conversing—the sensorimotor loops run subcortically, freeing cortex for conversation.

Synaptic Plasticity: Memory at the Molecular Level

Hebb's Postulate: A Rule for Learning

[VIEW IMAGES: Donald Hebb and illustrations of his cell assembly theory]

In 1949, Canadian psychologist Donald Hebb proposed a simple learning rule that would revolutionize neuroscience: "When an axon of cell A is near enough to excite a cell B and repeatedly or persistently takes part in firing it, some growth process or metabolic change takes place in one or both cells such that A's efficiency, as one of the cells firing B, is increased." This is colloquially summarized as "cells that fire together, wire together." Hebb's postulate provided a mechanistic account of associative learning: if two neurons are consistently co-active, their connection strengthens. This implements correlation detection at the synaptic level, allowing networks to learn statistical regularities in their inputs.

Hebb's rule has three key features: locality (changes occur at active synapses, not globally), cooperativity (presynaptic activity alone is insufficient; the postsynaptic cell must also be active), and specificity (only synapses participating in the co-activity are modified). These properties ensure learning is targeted and efficient. For more than two decades, Hebb's postulate remained theoretical. Then, in 1973, Tim Bliss and Terje Lømo reported the first clear physiological candidate for it in the rabbit hippocampus: long-term potentiation.
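The plain Hebbian rule can be written as Δw_i = η·x_i·y: each weight changes in proportion to the product of its presynaptic activity x_i and the postsynaptic activity y. The sketch below, with a hypothetical learning rate, a hypothetical helper name, and made-up activity patterns, shows locality and specificity (an input that is silent when the cell fires never changes) and correlation detection (inputs that reliably co-fire with the output gain the most weight).

```python
import numpy as np

def hebbian_update(w, x, y, eta=0.1):
    """Plain Hebbian rule: delta w_i = eta * x_i * y (no decay, no upper bound)."""
    return w + eta * x * y

# Rows are time steps; columns are three presynaptic inputs (1 = firing).
inputs = np.array([[1, 1, 0],
                   [1, 1, 1],
                   [0, 0, 1],
                   [1, 1, 0],
                   [0, 0, 1]])
# Assumption: input 0 is strong enough to fire the postsynaptic cell by itself.
outputs = inputs[:, 0]

w = np.zeros(3)
for x, y in zip(inputs, outputs):
    w = hebbian_update(w, x, y)
print(w)
```

Input 1 never drives the cell on its own, yet it ends with the same weight as input 0 because it always fires alongside it; input 2 fires just as often but rarely with the output. Because this rule only ever adds, weights would grow without limit under continued co-activity, one motivation for the depression mechanisms covered later in the lecture.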

Long-Term Potentiation: The Hebbian Mechanism

[VIEW IMAGES: LTP induction showing NMDA receptor activation and signaling cascades]

Long-term potentiation (LTP) is a lasting increase in synaptic strength following high-frequency stimulation of a pathway. Stimulate a hippocampal pathway with a brief burst of high-frequency pulses (a tetanus), and hours or days later, test stimuli produce larger excitatory postsynaptic potentials (EPSPs) than before: the synapse has been potentiated. LTP exhibits Hebb's properties: it's input-specific (only stimulated synapses are potentiated), associative (weak inputs are potentiated if they coincide with strong inputs), and cooperative (weak stimulation fails to induce LTP; strong stimulation succeeds). NMDA receptor-dependent LTP, the form found at many hippocampal synapses, is the most compelling cellular implementation of Hebb's rule yet discovered.

The mechanism involves the NMDA receptor, a glutamate receptor with unique properties. It's a coincidence detector—it opens only when two conditions are simultaneously met: glutamate binds (presynaptic activity) AND the postsynaptic membrane is depolarized (postsynaptic activity). Under resting conditions, the NMDA receptor channel is blocked by magnesium ions (Mg²⁺). Glutamate binding alone can't open it. But if the postsynaptic cell is simultaneously depolarized (by other active synapses or by strong presynaptic input), the depolarization expels the Mg²⁺ block, allowing the channel to open. Calcium (Ca²⁺) flows in, triggering signaling cascades that strengthen the synapse.
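The coincidence-detection logic can be made concrete with the empirical Mg²⁺-block fit of Jahr and Stevens (1990), a standard choice in modeling studies, in which the unblocked fraction is 1 / (1 + exp(-0.062·V)·[Mg²⁺]/3.57) with V in mV and [Mg²⁺] in mM. This sketch treats glutamate binding as all-or-none and ignores channel kinetics and the driving force for calcium; the helper names are hypothetical.

```python
import math

def mg_unblocked_fraction(v_mv, mg_mm=1.0):
    """Fraction of NMDA receptor channels free of Mg2+ block (Jahr & Stevens 1990 fit)."""
    return 1.0 / (1.0 + math.exp(-0.062 * v_mv) * mg_mm / 3.57)

def nmda_conductance_fraction(glutamate_bound, v_mv, mg_mm=1.0):
    """Relative NMDA conductance: needs glutamate (presynaptic activity)
    AND depolarization that relieves the Mg2+ block (postsynaptic activity)."""
    return mg_unblocked_fraction(v_mv, mg_mm) if glutamate_bound else 0.0

for glutamate, v in [(True, -70.0), (False, -10.0), (True, -10.0)]:
    frac = nmda_conductance_fraction(glutamate, v)
    print(f"glutamate={glutamate}, V={v} mV -> {frac:.3f}")
```

Under these assumptions, glutamate at a resting potential of -70 mV yields under 5% of the maximal conductance, depolarization without glutamate yields none, and only the combination opens a substantial fraction of channels.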

[VIEW IMAGES: NMDA receptor showing voltage-dependent magnesium block mechanism]

The calcium influx activates multiple pathways. CaMKII (calcium/calmodulin-dependent protein kinase II) phosphorylates AMPA receptors, increasing their conductance, and promotes the insertion of additional AMPA receptors into the synapse. More AMPA receptors means larger responses to glutamate: synaptic strengthening. Early-phase LTP (lasting hours) relies on modifying existing proteins. Late-phase LTP (lasting days to weeks) requires gene transcription and protein synthesis, building new synaptic machinery. This transition from early to late phases parallels the transition from short-term to long-term memory, making LTP a leading candidate for a cellular mechanism of memory storage.

Long-Term Depression and Spike-Timing-Dependent Plasticity

Not all plasticity strengthens synapses. Long-term depression (LTD) is a lasting decrease in synaptic strength, typically induced by prolonged low-frequency stimulation. LTD involves calcium influx through NMDA receptors (like LTP), but smaller, slower calcium elevations activate different enzymes—phosphatases instead of kinases—that remove AMPA receptors from synapses. LTP and LTD provide bidirectional control—synapses can be strengthened or weakened depending on the pattern of activity. This prevents runaway excitation (if only LTP existed, synapses would saturate at maximum strength) and enables flexible learning (strengthening relevant associations while weakening irrelevant ones).
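The bidirectional rule described here is often summarized as a calcium-control model: little calcium, no lasting change; moderate, prolonged calcium, LTD; large calcium, LTP. A minimal sketch, assuming arbitrary normalized calcium units, hypothetical thresholds `theta_d < theta_p`, a hypothetical fixed step size, and weights clipped to [0, 1] to mimic saturation:

```python
def calcium_dw(ca, theta_d=0.35, theta_p=0.55, rate=0.1):
    """Weight change as a function of postsynaptic Ca2+ (arbitrary units).
    Thresholds and rate are illustrative assumptions, not measured values."""
    if ca >= theta_p:
        return rate    # high Ca2+: kinases dominate, LTP
    if ca >= theta_d:
        return -rate   # moderate Ca2+: phosphatases dominate, LTD
    return 0.0         # little Ca2+: no lasting change

def update(w, ca):
    """Apply one plasticity event and keep the weight within [0, 1]."""
    return min(1.0, max(0.0, w + calcium_dw(ca)))

w = 0.5
for ca in [0.8, 0.8, 0.4, 0.1, 0.4, 0.4]:   # hypothetical sequence of Ca2+ events
    w = update(w, ca)
print(round(w, 2))
```

The same synapse moves up or down depending only on the size of the calcium signal, which is the sense in which LTP and LTD give bidirectional control.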

[VIEW IMAGES: STDP showing how spike timing determines potentiation vs depression]

Spike-timing-dependent plasticity (STDP), characterized in the 1990s, is a refinement of Hebbian learning. It turns out that mere co-activity isn't sufficient: temporal order matters. If the presynaptic neuron fires just before (within ~20 ms) the postsynaptic neuron fires, LTP occurs and the synapse strengthens. If the postsynaptic neuron fires before the presynaptic neuron, LTD occurs and the synapse weakens. This makes causal sense: if presynaptic activity consistently precedes postsynaptic activity, the synapse likely contributes to making the postsynaptic neuron fire and should be strengthened. If the order is reversed, the synapse could not have caused that spike and is weakened. STDP thus uses temporal order as a proxy for causation, learning predictive relationships from timing.
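The timing dependence is commonly modeled with a pair-based exponential window: for Δt = t_post - t_pre, the weight change is A₊·exp(-Δt/τ₊) when Δt > 0 and -A₋·exp(Δt/τ₋) when Δt < 0. The amplitudes and 20 ms time constants below are hypothetical round numbers chosen to match the ~20 ms window mentioned above, not fits to data; making depression slightly stronger than potentiation is a frequent modeling choice.

```python
import math

def stdp_dw(dt_ms, a_plus=0.010, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Pair-based STDP window; dt_ms = t_post - t_pre in milliseconds.
    Pre before post (dt > 0) potentiates; post before pre (dt < 0) depresses.
    Amplitudes and time constants are illustrative assumptions."""
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0  # exact coincidence: treated as no change in this sketch

for dt in (-40, -10, 10, 40):
    print(f"dt = {dt:+d} ms -> dw = {stdp_dw(dt):+.4f}")
```

Pairs far apart in time barely change the synapse; the sign of the change depends only on which spike came first.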

Learning Across Scales and Forms

Non-Associative Learning: The Simplest Forms

[VIEW IMAGES: Aplysia (sea slug) gill-withdrawal reflex used in Kandel's studies]

The simplest learning involves a single stimulus changing the strength of a response. Habituation is a decrease in response to repeated, innocuous stimulation: you stop noticing the hum of a refrigerator or the feeling of clothes on your skin. Habituation prevents wasting neural resources on irrelevant stimuli. Sensitization is an increase in response following a strong or noxious stimulus: after touching a hot stove, you become hyperresponsive to thermal stimuli. Eric Kandel shared the 2000 Nobel Prize in Physiology or Medicine, in part for elucidating the molecular mechanisms of habituation and sensitization in the sea slug Aplysia californica. Habituation involves decreased neurotransmitter release from sensory neurons (attributed to reduced calcium influx). Sensitization involves increased neurotransmitter release: serotonin from modulatory interneurons activates second-messenger cascades (cAMP and protein kinase A) that enhance calcium influx. These simple forms of plasticity, occurring at identified synapses, demonstrate that learning and memory begin at the molecular level.

Classical Conditioning: Learning Predictions

[VIEW IMAGES: Pavlov's apparatus and conditioning paradigm]

Classical (Pavlovian) conditioning is learning associations between stimuli. Russian physiologist Ivan Pavlov discovered that dogs would salivate not only to food (unconditioned stimulus, US) but also to stimuli consistently preceding food (conditioned stimulus, CS): a bell, a metronome, even the sight of the lab assistant. After repeated CS-US pairings, the CS alone elicits a conditioned response (CR): salivation, anticipation, arousal. Classical conditioning implements predictive learning: organisms learn which stimuli predict important events (food, danger, mates), enabling preparatory responses. The NMDA receptor's coincidence detection provides a candidate cellular mechanism: the CS activates presynaptic inputs; the US depolarizes the postsynaptic cell; coincident activity induces LTP, strengthening the CS pathway.

Modern theories view conditioning as prediction error learning. Rescorla and Wagner showed that learning occurs only when events are surprising—when the US is unexpected. If the CS already predicts the US perfectly, additional pairings produce no further learning. The animal has learned the predictive relationship; there's nothing left to learn. This parallels dopamine prediction error signals in the basal ganglia: dopamine neurons respond to unexpected rewards (prediction errors), not to fully predicted rewards. Classical conditioning and reinforcement learning share a computational core—both minimize prediction errors.
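The Rescorla-Wagner rule can be written as ΔV_X = αβ(λ - ΣV), where V_X is the associative strength of each cue present on a trial, ΣV is the summed prediction of all present cues, λ is the maximum strength the US supports (0 when the US is absent), and α and β are learning-rate parameters. The sketch below (helper name and parameter values hypothetical; one shared α for all cues, for simplicity) reproduces blocking, a classic consequence of learning only from surprise: once cue A fully predicts the US, a new cue B added alongside A learns almost nothing.

```python
def rescorla_wagner(trials, alpha=0.3, beta=1.0, lam=1.0, V=None):
    """Apply the Rescorla-Wagner update over a list of trials.

    trials: list of (cues_present, us_present) pairs, e.g. ({"A", "B"}, True)
    V:      optional starting associative strengths (copied, not modified).
    """
    V = dict(V or {})
    for cues, us_present in trials:
        prediction = sum(V.get(c, 0.0) for c in cues)      # summed prediction
        error = (lam if us_present else 0.0) - prediction  # surprise
        for c in cues:
            V[c] = V.get(c, 0.0) + alpha * beta * error
    return V

# Blocking: train A alone, then A and B together.
after_a = rescorla_wagner([({"A"}, True)] * 30)
blocked = rescorla_wagner([({"A", "B"}, True)] * 30, V=after_a)
# Control: A and B trained together from scratch.
control = rescorla_wagner([({"A", "B"}, True)] * 30)
print(round(blocked["B"], 3), round(control["B"], 3))
```

In the control group, A and B share the available associative strength roughly equally; in the blocking group, A already predicts the US, so the error term is near zero and B stays near zero.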

Instrumental Learning: Actions and Consequences

[VIEW IMAGES: Thorndike's puzzle box experiments demonstrating instrumental learning]

Instrumental (operant) conditioning is learning associations between actions and outcomes. Edward Thorndike placed cats in puzzle boxes; they'd thrash randomly until accidentally triggering the escape mechanism. Across trials, unsuccessful actions decreased while successful actions increased—his Law of Effect: behaviors followed by satisfying consequences are strengthened; those followed by annoying consequences are weakened. B.F. Skinner elaborated this into operant conditioning, demonstrating that animals learn complex behaviors through shaping—reinforcing successive approximations to a target behavior.

The basal ganglia implement instrumental learning through dopamine-modulated plasticity in the striatum. Direct pathway neurons (expressing D1 dopamine receptors) are strengthened by dopamine bursts, learning "this action led to reward: do it more." Indirect pathway neurons (expressing D2 dopamine receptors) are thought to be strengthened when dopamine dips, learning "this action led to no reward: do it less." Over trials, the basal ganglia learn action values: which actions are worth performing in which contexts. This is how habits form: initially, actions are goal-directed (you think about consequences); with repetition, they become habitual (automatic, stimulus-triggered). The shift from goal-directed control (prefrontal cortex and dorsomedial striatum) to habitual control (dorsolateral striatum) may help explain both skill mastery and addiction.

[VIEW IMAGES: Neural circuits distinguishing goal-directed vs habitual behavior]

Observational and Cognitive Learning

Not all learning requires direct experience. Observational learning (social learning, imitation) involves acquiring behaviors by watching others. Albert Bandura's famous Bobo doll experiments demonstrated that children imitate aggressive behaviors they observe, even without reinforcement. Mirror neurons in premotor cortex—neurons that fire both when performing an action and when observing someone else perform it—may support observational learning by mapping observed actions onto your own motor repertoire. Cognitive learning involves building mental models of the world—acquiring knowledge about spatial layouts, causal relationships, abstract concepts. These forms of learning transcend simple associations, requiring the flexible, relational memory systems we'll explore next.

Learning Beyond the Individual: Deep Time and Culture

Evolution as Learning: Genes Remember

[VIEW IMAGES: Baldwin effect showing how learning can guide evolution]

Here's a disorienting thought: your genome is a memory system, in a fairly literal sense. Every gene you carry is a solution to a problem your ancestors faced, tested across millions of iterations, written in molecular code. That you flinch from snakes before consciously recognizing them? That may be a memory tens of millions of years old, from when your mammalian ancestors were small and serpents were large. That infants prefer face-like patterns within hours of birth? That's genetic memory of what matters for survival: find the caregivers. Carl Jung called such inherited patterns archetypes, universal symbols and patterns appearing across cultures. He thought they were inherited psychological structures, and neuroscience suggests he wasn't entirely wrong. Not because ideas are genetically transmitted, but because brains shaped by similar evolutionary pressures develop similar intuitions, fears, and attractions.

Natural selection is learning by death: organisms that solve problems (finding food, avoiding predators, attracting mates) leave more offspring; those that fail are deleted. Evolution writes successful solutions into DNA, the ultimate long-term memory lasting millions of years. The timescale is glacial, with genetic change accumulating across generations, but the algorithm has the same outline as trial-and-error learning: vary (mutation), select (survival), retain (heredity). The Baldwin effect shows how individual learning can accelerate evolution: if learning a skill improves survival, individuals with genetic variations making that skill easier to learn will be favored, and over many generations the skill can become innate. This is how learning and evolution dance together: neural plasticity discovers solutions quickly; genetic change encodes them permanently.
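The vary-select-retain loop can be sketched as a minimal evolutionary algorithm: random bit-flip mutation (vary), keeping the fittest fifth of the population (select), and copying survivors and their mutated offspring into the next generation (retain). The helper name, target string, population size, mutation rate, and fitness function are arbitrary illustrative choices; real natural selection has no fixed target.

```python
import random

def evolve(target, pop_size=50, mutation_rate=0.02, generations=200, seed=0):
    """Truncation-selection evolutionary algorithm on bit strings (illustrative).
    Returns the best fitness found in each generation."""
    rng = random.Random(seed)
    n = len(target)

    def fitness(genome):
        return sum(g == t for g, t in zip(genome, target))

    population = [[rng.randint(0, 1) for _ in range(n)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        survivors = population[: pop_size // 5]                    # select
        offspring = [
            [1 - b if rng.random() < mutation_rate else b          # vary
             for b in rng.choice(survivors)]
            for _ in range(pop_size - len(survivors))
        ]
        population = survivors + offspring                         # retain
    return history

history = evolve(target=[1, 0] * 10)
print(history[0], history[-1])
```

Because survivors are carried over unchanged, the best fitness can never decrease from one generation to the next; the heredity step is what lets improvements accumulate.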

Lamarck's Revenge: Epigenetics and Inherited Experience

[VIEW IMAGES: Epigenetic modifications showing DNA methylation patterns]

Jean-Baptiste Lamarck, the early 19th-century French naturalist, proposed that organisms could pass acquired characteristics to offspring—giraffes stretching their necks would have longer-necked children. Darwin's natural selection replaced Lamarckian inheritance in biology's heart, and for 150 years, Lamarck was the punchline of textbook jokes. Except—and here's where biology gets deliciously complicated—Lamarck was partially right. Not about neck-stretching, but about experience modifying heredity. The mechanism is epigenetics—chemical modifications to DNA (methylation) or histones (the proteins DNA wraps around) that change which genes are expressed without altering the genetic sequence itself. And here's the startling part: some epigenetic marks can be inherited.

Studies of Dutch Hunger Winter survivors (the 1944-45 famine) revealed that children born to women who were pregnant during the famine had altered metabolism and increased disease risk, with some effects reported even in grandchildren. Trauma studies in Holocaust survivors suggest stress-related epigenetic changes may transmit across generations. In mice, fear conditioning to an odor produces epigenetic changes in sperm, and offspring are more sensitive to that odor: inherited memory of ancestral danger. This is neo-Lamarckianism: experience modifies gene expression, and those modifications can sometimes be inherited. The effects are subtle, time-limited (usually disappearing after a few generations), and controversial; not all studies replicate, and mechanisms are debated. But if the findings hold up, the implication is striking: the boundary between individual experience and hereditary information is porous. Your neurons aren't the only things learning from experience; your genome keeps a shorter-term ledger too.

[VIEW IMAGES: Transgenerational epigenetic effects across multiple generations]

Cultural Memory: Outsourcing Storage to Society

[VIEW IMAGES: Oral traditions and cultural transmission across generations]

Biological memory—synaptic and genetic—is constrained by the individual lifespan and the flesh that carries it. But humans discovered a trick that changed everything: externalized memory. Language lets one brain share patterns with another brain, transmitting solutions across individuals and generations without waiting for genetic change. An elder who discovers that certain mushrooms are poisonous can tell the young, encoding the knowledge in phonemes and grammar rather than DNA and natural selection. The information transfer is Lamarckian—acquired knowledge passed directly to descendants—but the medium is cultural rather than genetic.

Oral traditions preserved complex knowledge for millennia before writing existed. Australian Aboriginal songlines encode geographical information, navigation routes, resource locations, and histories, carried by cultures with a continuous presence of 40,000+ years, often described as the longest continuous cultural tradition on Earth. Stories function as mnemonic devices: embedding practical information in narrative makes it memorable, emotionally resonant, and resistant to corruption. When writing emerged about 5,000 years ago, it gave humanity a persistent, external backup for memory: clay tablets as cortical archives. Now books, databases, and the internet form an exocortex, a collective memory system orders of magnitude larger than any individual brain.

Memes: Ideas as Replicators

[VIEW IMAGES: Meme theory and cultural transmission diagrams]

Richard Dawkins coined "meme" in 1976 (long before internet cat pictures) to describe units of cultural transmission—ideas, behaviors, styles—that replicate by jumping from brain to brain through imitation, teaching, or communication. Memes are to culture what genes are to biology: replicators competing for transmission and persistence. A catchy tune, a religious belief, a fashion trend, a scientific theory—all memes, spreading through populations, mutating, being selected for "fitness" (how well they propagate). Some memes enhance their hosts' biological fitness (agricultural techniques, medical knowledge). Others reduce it but are excellent at spreading (ideologies demanding self-sacrifice, addictive behaviors). Memes don't care about your wellbeing—only their propagation.

This might sound abstract until you realize you're currently hosting thousands of memes—words, concepts, skills, beliefs, preferences—most acquired from others, not discovered independently. Your personality is partly an ecosystem of memes competing for expression. Memetics applies evolutionary thinking to culture: ideas have variation (mutation, recombination), selection (some spread more than others), and heredity (transmitted with varying fidelity). The parallel to neural learning is striking—Hebbian learning strengthens neural connections based on co-activity; memetic transmission strengthens ideas based on co-occurrence in social networks. Your brain is simultaneously shaped by genetic evolution (millions of years), synaptic plasticity (milliseconds to days), and memetic evolution (generations to seconds in the internet age).

Gossip and Social Learning: The Knowledge Network

[VIEW IMAGES: Social learning networks and information transmission]

Why do humans spend so much time talking about people who aren't present? Robin Dunbar proposes that gossip—seemingly frivolous social chatter—evolved as a scalable way to maintain group cohesion and share social knowledge. Primates maintain social bonds through grooming, but grooming doesn't scale beyond ~50 individuals (you can only groom one at a time). Language lets you "groom" multiple individuals simultaneously while transmitting information about who's trustworthy, who's dangerous, who cheated, who helped. Gossip is distributed social learning—each person acts as a node in a knowledge network, collecting and sharing information about social norms, alliances, threats, and opportunities.

Humans are obligate social learners—we acquire most knowledge from others rather than through individual trial and error. This is computationally efficient: why test every berry for toxicity when someone else already did the experiment? But it creates vulnerability—we inherit not just useful knowledge but misinformation, superstitions, biases. Social learning strategies have evolved: copy successful individuals (prestige bias), copy the majority (conformity bias), copy kin (nepotistic transmission). These biases shape which memes spread, creating cultural evolutionary dynamics that can run faster than genetic evolution but slower than individual learning. Fashion changes yearly; languages shift over centuries; moral intuitions evolve over millennia. Each timescale is a distinct memory system.
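Cultural-evolution models often formalize conformity bias with a frequency-dependent update of the form p' = p + D·p(1 - p)(2p - 1), where p is the fraction of the population holding a variant and D ≥ 0 sets the strength of the bias; this follows Boyd and Richerson's conformist-transmission model, and D = 0.3 below is an arbitrary choice. The helper names are hypothetical. The sketch shows the characteristic outcome: whichever variant starts in the majority takes over, and an exact 50/50 split stays put.

```python
def conformist_step(p, D=0.3):
    """One generation of conformist transmission; p is the variant's frequency."""
    return p + D * p * (1 - p) * (2 * p - 1)

def run(p0, generations=200, D=0.3):
    """Iterate conformist transmission from starting frequency p0."""
    p = p0
    for _ in range(generations):
        p = conformist_step(p, D)
    return p

for p0 in (0.4, 0.5, 0.6):
    print(p0, round(run(p0), 3))
```

Conformity thus amplifies small majorities, one reason cultural variants can stabilize within groups while differing between them.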

The integration of these memory systems (genetic, epigenetic, neural, cultural) creates cumulative cultural evolution, the ratchet that built civilization. Each generation inherits not just genes but knowledge, adding incremental improvements that persist. No single human could invent agriculture, writing, antibiotics, or smartphones from first principles. These are collective achievements, the product of millions of brains across thousands of generations, each contributing small modifications to inherited designs. Your brain contains on the order of 100 trillion synapses shaped by your experience; your genome carries roughly 20,000 protein-coding genes shaped by evolution; your mind hosts thousands of memes shaped by culture. You are a multi-timescale memory system, simultaneously ancient and novel, individual and collective: a temporary pattern in matter that remembers and transmits patterns across scales from milliseconds to millennia.

[VIEW IMAGES: Cumulative cultural evolution and technological ratchet effect]

Memory Systems: Architecture of Storage

Multiple Memory Systems: Declarative vs. Procedural

[VIEW IMAGES: Taxonomy of memory systems showing major divisions]

The brain contains multiple memory systems, each specialized for different types of information and supported by different neural structures. The fundamental division is between declarative memory (explicit, conscious, "knowing that") and procedural memory (implicit, unconscious, "knowing how"). Declarative memory includes episodic memory (personal experiences, events in context—your first day of school, what you had for breakfast) and semantic memory (factual knowledge, concepts—Paris is the capital of France, birds have feathers). Procedural memory includes motor skills (riding a bike, playing piano), perceptual skills (reading mirror-reversed text), and cognitive skills (mental arithmetic, chess strategies). The systems differ in their neural substrates, their accessibility to consciousness, and their flexibility.

The distinction was revealed by patient H.M. (Henry Molaison), perhaps neuroscience's most famous case. In 1953, to treat intractable epilepsy, surgeon William Scoville removed H.M.'s medial temporal lobes bilaterally, including the amygdala and much of the hippocampus. The surgery largely controlled his seizures but produced profound amnesia. Brenda Milner discovered that H.M. couldn't form new declarative memories: he forgot conversations minutes after they ended, never learned where he lived, and didn't recognize people who cared for him daily. Yet his procedural learning was intact: he learned motor skills (mirror drawing, rotary pursuit) at normal rates, improving across days despite having no conscious memory of practicing. On its own, this is a single dissociation: impaired declarative memory with preserved procedural memory. Patients with basal ganglia damage, such as in Parkinson's disease, can show the reverse pattern: impaired habit or skill learning with relatively preserved declarative memory. Together the two patterns form a double dissociation, demonstrating that memory is not unitary but comprises distinct systems.

[VIEW IMAGES: H.M.'s brain showing bilateral medial temporal lobe resection]

Episodic Memory: The Hippocampal Time Machine

[VIEW IMAGES: Hippocampal structure and place cell activity patterns]

The hippocampus is essential for episodic memory—memories of events in their spatial and temporal context. Lesions produce anterograde amnesia (inability to form new episodic memories) and temporally-graded retrograde amnesia (loss of recent memories with relative sparing of remote memories). This pattern suggests the hippocampus is necessary for encoding and initial storage but not for permanent storage—memories are eventually transferred elsewhere. John O'Keefe discovered place cells in the rat hippocampus—neurons that fire when the animal occupies specific locations, creating a neural map of space. Edvard and May-Britt Moser discovered grid cells in entorhinal cortex—neurons that fire in multiple locations forming a hexagonal grid, providing a metric coordinate system for navigation. O'Keefe and the Mosers shared the 2014 Nobel Prize for discovering the brain's "inner GPS."
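A common idealization of a grid cell's firing map, used in many modeling studies, sums three cosine gratings oriented 60 degrees apart; their peaks coincide on a triangular lattice, which produces the hexagonal firing pattern. In this sketch the helper name, spacing, orientation, and phase values are hypothetical, and the output is a unitless firing-rate proxy rather than spikes per second.

```python
import numpy as np

def grid_rate(x, y, spacing=0.5, orientation=0.0, phase=(0.0, 0.0)):
    """Idealized grid-cell firing map (unitless): the sum of three cosine
    gratings 60 degrees apart. Peaks (value 3) form a triangular lattice whose
    nearest-neighbour distance equals `spacing` (same units as x and y)."""
    k = 4 * np.pi / (np.sqrt(3) * spacing)  # wave number giving that spacing
    total = 0.0
    for j in range(3):
        angle = orientation + j * np.pi / 3
        total = total + np.cos(k * (np.cos(angle) * (x - phase[0])
                                    + np.sin(angle) * (y - phase[1])))
    return total

# Evaluate over a 1 m x 1 m arena; the map shows hexagonally arranged hot spots.
xs, ys = np.meshgrid(np.linspace(0, 1, 101), np.linspace(0, 1, 101))
rate_map = grid_rate(xs, ys)
```

Changing `spacing`, `orientation`, or `phase` shifts, rotates, or rescales the lattice, loosely analogous to how different grid cells in entorhinal cortex tile space at different scales and offsets.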

But the hippocampus does more than map space: it constructs episodic memories by binding together distributed cortical representations of an event's components (who, what, where, when). The hippocampus rapidly encodes associations between arbitrary elements, keeping similar events distinct (pattern separation) while allowing a partial cue to reinstate a whole stored memory (pattern completion). During sleep, especially slow-wave sleep, hippocampal activity replays recent experiences, often in time-compressed, fast-forward form. This replay is thought to drive systems consolidation, gradually transferring memories from hippocampus to cortex for long-term storage.
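Pattern completion is often illustrated with a Hopfield network, an abstract auto-associative memory sometimes used as a cartoon of the recurrent connections in hippocampal region CA3. Patterns are stored with a Hebbian outer-product rule, and recall repeatedly sets each unit to the sign of its summed input. The sketch below uses tiny, hand-picked orthogonal patterns, hypothetical helper names, and synchronous updates for brevity (the classic formulation updates units one at a time); it recovers a stored pattern from a cue with three of sixteen units flipped and does not model pattern separation.

```python
import numpy as np

def store(patterns):
    """Hebbian outer-product storage of +1/-1 patterns, with no self-connections."""
    P = np.asarray(patterns, dtype=float)
    W = P.T @ P / P.shape[1]
    np.fill_diagonal(W, 0.0)
    return W

def recall(W, cue, max_steps=10):
    """Synchronous recall: every unit takes the sign of its summed input,
    repeated until the state stops changing."""
    state = np.asarray(cue, dtype=float).copy()
    for _ in range(max_steps):
        new_state = np.where(W @ state >= 0, 1.0, -1.0)
        if np.array_equal(new_state, state):
            break
        state = new_state
    return state

p1 = np.array([1] * 8 + [-1] * 8)   # two orthogonal stored "memories"
p2 = np.array([1, -1] * 8)
W = store([p1, p2])

cue = p1.copy()
cue[:3] *= -1                        # degrade the cue: flip 3 of 16 units
completed = recall(W, cue)
print(np.array_equal(completed, p1))
```

The storage rule is the same Hebbian co-activity principle discussed earlier in the lecture, applied across a recurrent network rather than at a single synapse.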

[VIEW IMAGES: Hippocampal replay during sleep showing reactivation patterns]

Semantic Memory: Building Conceptual Knowledge

Semantic memory (your knowledge of facts, concepts, meanings) is organized differently from episodic memory. You know Paris is the capital of France, but you probably don't remember when or where you learned this. Semantic knowledge becomes decontextualized, abstracted from the episodes in which it was learned. Some patients with hippocampal damage, though unable to form new episodic memories, can slowly accumulate new semantic knowledge, suggesting at least partly hippocampus-independent learning mechanisms. Neocortical areas, especially anterior and lateral temporal cortex, store semantic knowledge in distributed networks where concepts are represented by patterns of activity across neurons tuned to different features.

[VIEW IMAGES: Semantic network organization showing hierarchical concept representations]

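How does semantic memory acquire structure (categories, hierarchies, similarities)? Distributed representations offer an answer. If "dog" activates neurons tuned for features such as fur, four legs, and barking, and "cat" activates an overlapping set (fur, four legs, meowing), the two concepts are similar because their activity patterns overlap. Categories emerge as clusters of overlapping patterns, and hierarchies emerge because broad features are shared by many concepts while specific features distinguish individual ones.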

Consolidation: From Hippocampus to Cortex

The standard consolidation model proposes that episodic memories are initially encoded jointly by the hippocampus and distributed neocortical regions. The hippocampus binds the distributed components of an experience: seeing a face activates the fusiform face area, hearing a name activates auditory cortex, feeling an emotion engages the amygdala, and the hippocampus links these representations together. Over time (days to years), memories are consolidated into neocortex through repeated hippocampal-cortical dialogue, especially during sleep. Eventually, cortico-cortical connections become strong enough to support retrieval without the hippocampus. This explains temporally graded retrograde amnesia: recent memories, still hippocampus-dependent, are lost, while remote memories, already consolidated into cortex, are preserved.
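The standard model's account of temporally graded retrograde amnesia can be sketched numerically. The model below is a deliberately simple assumption: cortical strength grows exponentially with memory age (time constant `TAU_DAYS`), and after a complete hippocampal lesion a memory survives only if its cortical strength reaches `THRESHOLD`. Both constants are hypothetical; real consolidation timescales vary widely across species and memory types.

```python
import math

TAU_DAYS = 365.0   # hypothetical consolidation time constant
THRESHOLD = 0.5    # hypothetical minimum cortical strength for recall without the hippocampus

def cortical_strength(age_days):
    """Cortical trace strengthens with repeated hippocampal-cortical replay (assumed exponential)."""
    return 1.0 - math.exp(-age_days / TAU_DAYS)

def recalled_after_hippocampal_lesion(age_days):
    """With the hippocampus removed, only sufficiently consolidated memories survive."""
    return cortical_strength(age_days) >= THRESHOLD

for age in [1, 30, 180, 365, 3650]:
    status = "preserved" if recalled_after_hippocampal_lesion(age) else "lost"
    print(f"{age:>5} days old: {status}")
```

The gradient comes entirely from memory age: the same lesion erases recent memories and spares remote ones.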

[VIEW IMAGES: Systems consolidation model showing gradual memory transfer]

However, some episodic memories, the vivid and detailed recollections of specific events, seem to remain hippocampus-dependent indefinitely. This has led to competing theories. Multiple trace theory proposes that each retrieval creates a new hippocampal trace, so older, frequently retrieved memories are represented by more traces and are more likely to survive partial hippocampal damage, while detailed recollection at any age still depends on the hippocampus. Transformation theory proposes that consolidated memories lose episodic detail and become more semantic: you remember the gist but not the specifics. The debate continues, but these accounts agree that consolidation is an active, time-dependent process rather than passive storage. Memories are dynamic, reconstructed at retrieval, and modified by subsequent experience.
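Multiple trace theory's prediction for partial hippocampal damage can be written as a one-line probability, under a simplifying assumption that is ours rather than the theory's: each trace is destroyed independently with probability p, so a memory with n traces survives with probability 1 - p**n. A complete lesion (p = 1) removes every trace regardless of age.

```python
def survival_probability(n_traces, p_destroyed):
    """P(at least one of n independently damaged traces survives) = 1 - p**n."""
    return 1.0 - p_destroyed ** n_traces

# More retrievals -> more traces (the theory's claim); independence is an assumption.
for n in [1, 2, 4, 8]:
    partial = survival_probability(n, 0.5)   # hypothetical partial lesion
    complete = survival_probability(n, 1.0)  # complete lesion
    print(f"{n} traces: partial lesion {partial:.4f}, complete lesion {complete:.1f}")
```

Under this sketch, partial damage produces a temporal gradient (more traces, better survival), while complete damage produces none, which is how the theory reconciles graded amnesia with lasting hippocampal dependence.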

Working Memory: The Cognitive Workspace

Working Memory vs. Short-Term Memory

[VIEW IMAGES: Baddeley's working memory model showing multiple components]

Short-term memory is a temporary storage buffer: you hold a phone number long enough to dial it, then forget it. Working memory is an active workspace where information is both maintained and manipulated for cognitive tasks. Alan Baddeley and Graham Hitch (1974) proposed a multi-component working memory model comprising (1) the phonological loop (verbal and acoustic information, as in rehearsing phone numbers or inner speech), (2) the visuospatial sketchpad (visual and spatial information, as in mental rotation or imagining routes), and (3) the central executive (attentional control: coordinating the subsystems and updating and manipulating information). In 2000, Baddeley added a fourth component, the episodic buffer, which integrates information across modalities and links working memory with long-term memory.

Working memory capacity is famously limited. George Miller's 1956 paper "The Magical Number Seven, Plus or Minus Two" suggested that people can hold about 7 items in immediate memory. Later estimates suggest the true capacity is closer to 4±1 chunks once rehearsal and chunking are controlled for. This limit has broad consequences: it constrains reasoning, problem-solving, learning, and comprehension, and when working memory is overloaded, performance drops sharply. Individual differences in working memory capacity predict fluid intelligence, academic achievement, and attentional control.
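Chunking is what lets a limit of about four items cover longer inputs: familiar groupings retrieved from long-term memory count as single items. The sketch below uses a hypothetical set of known acronyms and a greedy longest-match grouping rule (an assumption; people chunk in many ways) to show a 13-letter string collapsing into four chunks.

```python
CAPACITY = 4  # the estimate of roughly 4 chunks (ignoring the ±1)
KNOWN_CHUNKS = {"FBI", "CIA", "USA", "NASA"}  # hypothetical long-term knowledge

def chunk(letters, known, max_len=4):
    """Greedy longest-match grouping: familiar acronyms become single items."""
    items, i = [], 0
    while i < len(letters):
        for size in range(min(max_len, len(letters) - i), 0, -1):
            piece = letters[i:i + size]
            if size == 1 or piece in known:  # unfamiliar letters stay as single items
                items.append(piece)
                i += size
                break
    return items

letters = "FBICIAUSANASA"
raw = list(letters)
grouped = chunk(letters, KNOWN_CHUNKS)
print(len(raw), len(raw) <= CAPACITY)      # 13 False
print(grouped, len(grouped) <= CAPACITY)   # ['FBI', 'CIA', 'USA', 'NASA'] True
```

The letters are identical in both cases; only the knowledge applied to them changes, which is why expertise can look like expanded working memory.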

Neural Mechanisms: Persistent Activity in Prefrontal Cortex

[VIEW IMAGES: Prefrontal neurons showing sustained delay-period activity]

How does the brain maintain information in working memory? Classic studies by Patricia Goldman-Rakic recorded from monkey prefrontal cortex during delayed-response tasks: show a cue (e.g., a dot at a particular location), wait a delay (several seconds), then the monkey must respond to the remembered location. During the delay—with no external stimulus—prefrontal neurons fire persistently, maintaining a neural representation of the cue's location. This persistent activity is thought to be the neural substrate of working memory: recurrent excitatory connections within prefrontal networks sustain activity, keeping information "online" for cognitive processing.
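The idea that recurrent excitation keeps information "online" can be sketched with a single-population firing-rate model, tau·dr/dt = -r + f(w·r + input). With a strong enough recurrent weight w the network is bistable, so a brief cue switches it into a self-sustaining high-activity state. All parameters are hypothetical, and a distractor is modeled, by assumption, as a brief inhibitory input to show how such a state can be knocked out.

```python
import math

def sigmoid(x, threshold=5.0, slope=1.0):
    """Population firing-rate nonlinearity (output between 0 and 1)."""
    return 1.0 / (1.0 + math.exp(-(x - threshold) / slope))

def delay_activity(cue=10.0, distractor=0.0, w_recurrent=10.0, tau_ms=10.0, dt_ms=1.0):
    """Euler-integrate tau dr/dt = -r + f(w*r + input) over a 1-second trial.

    Cue input is on from 100 to 200 ms; an optional distractor (negative input)
    is on from 500 to 550 ms. Returns the population rate at the end of the trial.
    """
    r = 0.0
    for step in range(int(1000 / dt_ms)):
        t = step * dt_ms
        external = 0.0
        if 100 <= t < 200:
            external += cue
        if 500 <= t < 550:
            external -= distractor
        r += (dt_ms / tau_ms) * (-r + sigmoid(w_recurrent * r + external))
    return r

print(round(delay_activity(cue=10.0), 3))                   # high: activity outlasts the cue
print(round(delay_activity(cue=0.0), 3))                    # low: nothing to hold
print(round(delay_activity(cue=10.0, distractor=10.0), 3))  # low: the pulse collapsed it
```

The memory here is nothing but ongoing firing held up by its own feedback, so it costs activity throughout the delay and disappears if that activity is interrupted.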

Persistent activity, however, is metabolically expensive and potentially fragile: a distraction can disrupt it. More recent theories propose activity-silent working memory, in which information is held in short-term synaptic modifications (e.g., facilitated synapses, calcium-dependent changes) rather than in sustained firing. When the information is needed, a read-out signal reactivates the latent representation. Activity-silent storage is proposed to be more robust to distraction and less energetically costly. Working memory likely uses both mechanisms: persistent activity for information that is actively maintained, and synaptic traces for information held in a latent state.
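Activity-silent storage can be sketched in the same spirit, loosely following synaptic-facilitation accounts of working memory. By assumption, the cued item's population leaves its synapses facilitated (efficacy `u` raised above baseline `U_BASE`), facilitation decays silently with time constant `TAU_F_S`, and a nonspecific probe drives all populations equally so the facilitated one responds most. Every constant and the linear read-out are hypothetical simplifications.

```python
import math

U_BASE = 0.2    # hypothetical resting synaptic efficacy (release probability)
TAU_F_S = 1.5   # hypothetical facilitation decay time constant, in seconds

def residual_facilitation(u_after_cue, delay_s):
    """Facilitation relaxes back toward baseline during the delay; no spiking needed."""
    return U_BASE + (u_after_cue - U_BASE) * math.exp(-delay_s / TAU_F_S)

def probe_readout(cued_item, items, delay_s, u_after_cue=0.8, probe=1.0):
    """A nonspecific probe drives every item population equally; the population whose
    synapses are still facilitated responds most. Returns (decoded item, responses)."""
    responses = {}
    for item in items:
        u0 = u_after_cue if item == cued_item else U_BASE  # only the cued item fired
        responses[item] = probe * residual_facilitation(u0, delay_s)
    return max(responses, key=responses.get), responses

decoded, responses = probe_readout("left", ["left", "right"], delay_s=1.0)
print(decoded, {k: round(v, 3) for k, v in responses.items()})
```

No firing is simulated during the delay at all; the item is recoverable only because the probe meets synapses that are still primed, and that advantage fades as facilitation decays.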

[VIEW IMAGES: Frontoparietal networks supporting working memory maintenance]

Working Memory as Interface to Executive Function

Working memory is not just a passive buffer—it's the workspace of thought. It holds the premises while you reason toward a conclusion. It maintains the goal while you plan steps to achieve it. It keeps track of subgoals during complex tasks. Working memory interfaces with long-term memory (retrieving relevant knowledge) and with executive control systems (updating, inhibiting, switching). The central executive component of Baddeley's model overlaps heavily with executive function—processes that regulate thought and action, including planning, cognitive flexibility, inhibitory control, and error monitoring.

Prefrontal cortex, especially dorsolateral prefrontal cortex (dlPFC), is central to working memory and executive control. Damage here can produce dysexecutive syndrome: impaired planning, perseveration (persisting with a response after it has stopped being appropriate), poor inhibitory control, and distractibility. Patients can often describe the correct behavior but fail to carry it out; they lack the online maintenance and control processes that keep goals active and regulate behavior accordingly. Working memory capacity and executive function are closely related, perhaps inseparable: both reflect the ability to maintain and manipulate goal-relevant information in the face of distraction and competing demands.

[PREVIEW: Executive function and cognitive control in prefrontal cortex]

Thought Questions for Discussion

Memory Across Timescales—Who Owns the Pattern? You are simultaneously a carrier of genetic memories (millions of years old), epigenetic marks (potentially from your grandparents' experiences), neural memories (formed throughout your life), and cultural memes (some ancient, some hours old). When you feel inexplicably anxious near snakes, follow a family tradition, remember your first day of school, or quote a TikTok video, different memory systems operating at different timescales are expressing themselves through you. Which of these is most "you"? If your cultural and genetic memories sometimes conflict (e.g., evolutionary food preferences vs. cultural dietary norms), which should win? Are you a unified agent, or a temporary coalition of memory systems that occasionally disagree?

The Paradox of Learning Without Memory (H.M. and Procedural Learning): Patient H.M. could learn new motor skills but had no conscious memory of practicing them. Each day he improved at mirror drawing, yet claimed he had never done the task before. This dissociation shows that learning and conscious remembering are not identical: you can learn without remembering having learned. What does this tell us about consciousness and skill? If H.M. could acquire complex skills without any conscious recollection of practicing them, what role does consciousness play in learning? Could experts who perform "automatically" (musicians, athletes) be accessing procedural memories that never required conscious encoding?

The Forgetting Paradox: Is Memory Failure a Bug or a Feature? We typically view forgetting as a failure, something to be minimized or overcome. But consider: if you remembered every experience in vivid detail, would this be beneficial or debilitating? Some people with hyperthymesia (highly superior autobiographical memory) report being overwhelmed by memories and struggling to extract general principles from specific episodes. Perhaps forgetting is adaptive, allowing you to extract regularities (semantic knowledge) by discarding episodic details. Is forgetting a design feature that enables generalization, or a cost we pay for having finite neural resources?

Working Memory Capacity and Intelligence: Cause or Correlation? Working memory capacity strongly predicts fluid intelligence and academic achievement. But does a large working memory capacity cause higher intelligence, or are both caused by some third factor (e.g., efficient neural processing, attentional control)? Consider: training can modestly improve working memory performance, but does this transfer to improved intelligence, or only to the trained tasks? If we could dramatically expand working memory capacity (through neural augmentation or brain-computer interfaces), would we become proportionally more intelligent, or would we encounter other bottlenecks?

Practice Questions:

• The cerebellum implements _______ learning using _______ fibers that carry error signals to modify synapses between parallel fibers and _______ cells.

• The basal ganglia learn through _______ learning; dopamine neurons signal _______ errors—bursts for unexpected rewards, pauses for omitted rewards.

• Hebb's postulate states that synapses strengthen when presynaptic and postsynaptic neurons are _______ active; this is summarized as "cells that fire together, _______."

• Long-term potentiation (LTP) requires activation of _______ receptors, which act as coincidence detectors requiring both glutamate binding and postsynaptic _______.

• NMDA receptors are normally blocked by _______ ions; depolarization expels this block, allowing _______ influx that triggers LTP.

• Spike-timing-dependent plasticity (STDP) strengthens synapses when the _______ neuron fires before the _______ neuron, implementing a causality detector.

• _______ is decreased response to repeated innocuous stimulation; _______ is increased response following strong or noxious stimulation.

• Classical conditioning pairs a conditioned stimulus (CS) with an _______ stimulus (US); learning occurs when the US is _______, not when fully predicted.

• Instrumental learning follows the Law of _______: behaviors followed by _______ consequences are strengthened; those followed by annoying consequences are weakened.

• Natural selection can be understood as learning by _______; the _______ effect shows how individual learning can accelerate evolution.

• _______ refers to chemical modifications (like DNA methylation) that change gene expression without altering the genetic sequence; some marks can be _______ inherited.

• Richard Dawkins coined the term _______ to describe units of cultural transmission that replicate by jumping from _______ to _______.

• _______ cultural evolution is the process where each generation inherits knowledge and adds incremental improvements, building civilization through _______ contributions.

• Declarative memory includes _______ memory (personal experiences) and _______ memory (factual knowledge); both require the _______.

• Patient H.M. had bilateral _______ lobe resection, producing profound _______ amnesia (inability to form new declarative memories) with preserved _______ memory (motor skills).

• Hippocampal _______ cells fire when an animal occupies specific locations; _______ cells in entorhinal cortex fire in hexagonal grid patterns.

• _______ consolidation is the gradual transfer of memories from _______ to neocortex over time, especially during _______.

• Baddeley's working memory model includes the _______ loop (verbal information), the _______ sketchpad (visual/spatial information), and the _______ executive (attentional control).

• Working memory capacity is limited to about _______±1 chunks; persistent activity in _______ cortex maintains information during delays.

📝 View Answer Key for Chapter 10

[PREVIEW: Executive function systems and prefrontal cortex organization]